On average, corporations in regulated industries leave between 73% and 95% of their data unused. The causes of this “wasted data” vary, but the most common is regulatory concern over privacy. Currently, 137 out of 194 countries have enacted or are in the process of enacting data privacy legislation, and those laws can vary widely from region to region (for example, GDPR vs. the CLOUD Act) and even from state to state. Given the complexity involved in using data, organizations are seeking new ways to realize its full value while remaining compliant with regulations.

After decades of research into privacy enhancing technologies, there is finally a way for businesses to move beyond just storing their data to using it to make better, more informed decisions. Privacy Enhancing Technologies (PETs) enable businesses that handle large volumes of highly sensitive data to use it to its greatest potential without sacrificing privacy or protection. They are engineered to minimize exposure of sensitive data and maximize data security, and to allow that data to be analyzed and used while it is fully or partially encrypted.

Privacy Enhancing Technologies let businesses collaborate on data and maximize its value without compromising the privacy of customers, clients, or intellectual property. PETs also support decentralized data analysis in which data never leaves its jurisdiction of origin, an important capability where data localization or transfer restrictions apply, for example in the European Union under GDPR.

Advantages of Privacy Enhancing Technologies

The advantages of Privacy Enhancing Technologies include the ability to analyze disparate sets of data, train and tune models on encrypted data, and allow multiple users to conduct secure calculations on pooled or aggregated data. Which capabilities are available depends not only on the expertise of the team deploying Privacy Enhancing Solutions on their systems, but also on which PETs they choose to use.

What are the Different Types of Privacy Enhancing Solutions?

- Fully Homomorphic Encryption – a form of encryption that enables computations on encrypted data, without decrypting it

- Multiparty Computation – uses cryptographic protocols that allow multiple parties to compute over their combined data while keeping their individual inputs private

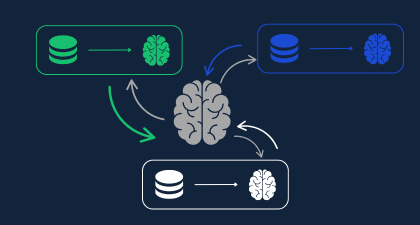

- Federated Learning – allows a machine learning model to be trained across separate data sets without the data itself being shared or moved to a central location

Individually, each of these Privacy Enhancing Solutions provides organizations with specific tools to realize the full value of their data. When combined, PETs complement each other, not only enhancing the value of data but also eliminating points of failure in an organization’s data processing structure.
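To make "computing on encrypted data" concrete, here is a minimal sketch using the Paillier cryptosystem. Note the assumptions: Paillier is only additively homomorphic (ciphertexts can be added together and multiplied by a plaintext constant), so it is a simpler relative of Fully Homomorphic Encryption rather than an instance of it. The primes are deliberately tiny and insecure, and every function name is illustrative rather than taken from any product or library.

```python
import math
import secrets

# Toy key material: hypothetical, insecure primes chosen only for readability.
P, Q = 1789, 1861
N = P * Q                      # public modulus
N_SQ = N * N
G = N + 1                      # common simple choice of public generator
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)   # private: lcm(P-1, Q-1)
MU = pow(LAM, -1, N)           # private: inverse of LAM mod N (valid for G = N + 1)


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N with the public key (N, G)."""
    while True:
        r = secrets.randbelow(N - 1) + 1   # fresh randomness for each ciphertext
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Decrypt a ciphertext with the private key (LAM, MU)."""
    x = pow(c, LAM, N_SQ)
    return ((x - 1) // N * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Return a ciphertext of (m1 + m2) without decrypting either input."""
    return (c1 * c2) % N_SQ


def scale_encrypted(c: int, k: int) -> int:
    """Return a ciphertext of (k * m) for a plaintext constant k."""
    return pow(c, k, N_SQ)


# The party doing the arithmetic only ever sees ciphertexts.
encrypted_total = add_encrypted(encrypt(1200), encrypt(345))
print(decrypt(encrypted_total))  # 1545
```

In this sketch, whoever holds only `N` and `G` can combine encrypted values but cannot read them; only the holder of the private key can decrypt the result. Production systems would use a vetted library and much larger keys.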

| | Federated Learning | Secure Multiparty Computation | Homomorphic Encryption |
| --- | --- | --- | --- |
| Definition | Allows parties to share insights from analysis on individual data sets, without sharing data itself. | Allows parties to perform a joint computation on individual inputs without having to reveal underlying data to each other. | Data and/or models encrypted at rest, in transit, and in use (ensuring sensitive data never needs to be decrypted), but still enables analysis of that data. Can be combined with other methods, like SMPC, to offer hybrid approaches. |
| Typical Use Case | Learning from user inputs on mobile devices (e.g., spelling errors and auto-completing words) to train a model. | Benchmarking between collaborating parties where aggregated output is adequate. | Analysis of sensitive data where flexibility around computation is desired, and regulatory compliance, precision, and security are necessary. |
| Drawbacks | Complexity of managing distributed systems; sharing aggregated insights may expose unwanted information; large-scale data needed to glean valuable insights; model parameters known by collaborating parties. | Output is known by all parties and can therefore be used to infer sensitive data; each deployment requires a completely custom setup, making it complex to implement; typically requires intensive communication between parties, driving high costs. | Best for batch or “human scale” computations. |

How can PETs be combined to benefit businesses? It depends on the use case. We’ll explore two distinct examples below.

Combining Federated Learning with Homomorphic Encryption

Federated Learning is a PET that allows parties to share insights from analysis on individual data sets without sharing the data itself. Many organizations that use machine learning and artificial intelligence to build models turn to federated learning when their data is decentralized.

For example, suppose a major transportation conglomerate wishes to optimize its bus routes nationwide. GPS data from the company’s fleet is stored in several data centers hundreds of miles apart. Because of the way the data is stored, compiling it all in one central database is both costly and inadvisable. Moving the data out of multiple data centers would involve either manual labor, which is costly and slow, or a transfer to a cloud provider, which places the security of the data in the hands of a third party.
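The bus-route scenario can be sketched with federated averaging, one common way to implement federated learning. This is an illustration under stated assumptions, not the method any particular vendor uses: the model is a single weight `w` predicting travel time from route distance, and the per-site data sets, function names, and hyperparameters are all hypothetical. Each data center trains on its own records and sends back only its updated weight and record count; the raw GPS-derived records never leave the site.

```python
from typing import List, Tuple

# One site's private records: (route distance in km, travel time in minutes).
Dataset = List[Tuple[float, float]]


def local_update(w: float, data: Dataset, lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient-descent steps for the model y = w * x on one site's data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Combine site weights, weighting each by the number of records it trained on."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(sites: List[Dataset], rounds: int = 50) -> float:
    """Coordinator loop: broadcast the global weight, collect updates, average them."""
    w = 0.0
    for _ in range(rounds):
        # Only (weight, record count) pairs cross site boundaries.
        updates = [(local_update(w, data), len(data)) for data in sites]
        w = federated_average(updates)
    return w


# Hypothetical data held in three separate data centers.
sites = [
    [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)],
    [(4.0, 8.0), (5.0, 10.0)],
    [(2.0, 4.0), (6.0, 12.0)],
]
global_w = train(sites)
```

Because the coordinator still sees each site's individual update in the clear, this plain version is exposed to the inference risk that motivates adding encryption on top.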

Federated learning avoids moving the data by training where the data already lives, but used on its own it has limitations. It requires all participating organizations to determine in advance which types of analysis will be performed on the combined data sets, which makes it rigid and makes new analyses very challenging to adopt. Additionally, a third party could analyze updates to the model to make inferences about the underlying data, thereby putting the data at risk. Finally, aggregating the results can lead to a loss of precision and accuracy, undermining the goal of the machine learning project.

Combining Homomorphic Encryption with Federated Learning not only gives machine learning a greater level of security; it also improves accuracy and makes the most of the available data. Because both the data and the results are encrypted, nothing can be inferred about the model or the data from what is exchanged. Only permissioned parties are able to decrypt the results.

Combining Federated Learning with Multiparty Computation and Homomorphic Encryption

What happens when there is especially sensitive data being computed, and multiple parties are collaborating to create a shared model on that data?

In cases like these, combining federated learning and homomorphic encryption is not enough. With multiparty computation added, all data is encrypted, all models are built on encrypted data, and all parties must agree before any results can be accessed. This protects data even if a participant is fully compromised. The distributed nature of multiparty computation also protects against denial-of-service attacks. As a result, an infiltrator cannot learn anything about the data, the model, or the results of training that model, and external actors have less ability to prevent the use of the service.
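One building block behind the "all parties must agree" property is additive secret sharing, sketched below for a joint sum. This is a simplified illustration that assumes honest-but-curious parties and omits the encryption and federated-learning layers described above; the modulus and function names are hypothetical. Each private value is split into random-looking shares, one per party, and the total can only be reconstructed when every party contributes its partial sum.

```python
import secrets
from typing import List

# Hypothetical public modulus; all share arithmetic is done modulo this value.
MODULUS = 2**61 - 1


def split_into_shares(value: int, n_parties: int) -> List[int]:
    """Split a value into n shares that sum to it mod MODULUS.

    Any n - 1 of the shares are uniformly random and reveal nothing on their own.
    """
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % MODULUS
    return shares + [last]


def reconstruct(shares: List[int]) -> int:
    """Recombine a complete set of shares into the value they encode."""
    return sum(shares) % MODULUS


def secure_sum(private_inputs: List[int]) -> int:
    """Compute the sum of all inputs without any party seeing another's input."""
    n = len(private_inputs)
    # Party i splits its input and sends share j to party j.
    distributed = [split_into_shares(v, n) for v in private_inputs]
    # Party j adds up the shares it received; this partial sum looks random.
    partial_sums = [
        sum(distributed[i][j] for i in range(n)) % MODULUS for j in range(n)
    ]
    # The total appears only when every party publishes its partial sum.
    return reconstruct(partial_sums)


total = secure_sum([120, 45, 300])
print(total)  # 465
```

A participant who is compromised in this sketch leaks its own input and the shares it holds, but those shares alone say nothing about the other parties' inputs.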

In practical terms, a few industries are at the forefront of combining Federated Learning, Fully Homomorphic Encryption, and Multiparty Computation:

- Fighting fraud: Any given bank has access to only 15–25% of its customers’ financial information. The rest is held by a number of external financial institutions. Privacy Enhancing Technologies can be used together to build a complete customer profile. By collaborating with other banks, fraud prevention officers can pool data and then train a model on that data while it is still encrypted, in order to predict which types of fraud are most common in their country or which accounts flagged for fraud are likely to show suspicious activity again.

- Cyber Threat Intelligence Sharing: Organizations can share protected network trace data and use it to develop models of insider cyber attacks. These models would help organizations better prevent insider cyber attacks in the future.

- Genome-Wide Association Studies (GWAS): Data collaboration between multiple medical institutions makes it possible to train a model that predicts, based on a person’s genes, the likelihood of developing certain kinds of cancer or how that person will react to a specific strain of COVID-19. Some of this research is already being done today.
