Data analysis is a central part of business operations today because it helps organizations cut costs and generate new revenue, in many cases by gaining insight into customer preferences and customizing their offerings to maximize returns. However, some of the data held by businesses is sensitive and has the potential to compromise user privacy and security. As a result, several regulations have been introduced, such as the General Data Protection Regulation (GDPR), the Health Insurance Portability and Accountability Act of 1996 (HIPAA), and the California Consumer Privacy Act (CCPA).

Data anonymization is a method commonly employed by businesses to enable the use of the information they hold without compromising user privacy and security. In this blog, we will examine data anonymization as an approach, its advantages, and its drawbacks.

What is Data Anonymization?

Data anonymization is the process of removing or hashing various data points that link a particular piece of data to an individual. This process lets organizations store and exchange customer data that can be used for purposes such as analytics, visualization, or sharing with third parties without revealing any connection of the data to a particular person.

Data anonymization usually retains as much data as possible, and the anonymized data tends to resemble the original dataset yet with less granularity. For example, if your organization gathers full DOB (mm/dd/yyyy), it can be anonymized by hiding the month and day and retaining only the year, thereby not exposing the personally identifiable information (PII).

Data Anonymization Techniques

Here are some of the most common data anonymization techniques employed today.

Data masking

Data masking involves creating a fake, but structurally similar version of your data. This is accomplished through modification techniques such as shuffling, simple word or character substitution, encryption, or masking out certain data. For example, the letter “R” can be masked as “L” through substitution masking, or credit card numbers masked out as “**** **** **** 7598.”
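The two examples above can be sketched in a few lines of Python. This is a minimal illustration rather than a production masking tool; the function names `mask_card_number` and `substitute_chars` are hypothetical, and the card number is made up.

```python
def mask_card_number(card_number, visible=4, mask_char="*"):
    """Mask all but the last `visible` digits, keeping groups of four."""
    digits = [c for c in card_number if c.isdigit()]
    hidden = max(len(digits) - visible, 0)
    masked = [mask_char] * hidden + digits[hidden:]
    groups = ["".join(masked[i:i + 4]) for i in range(0, len(masked), 4)]
    return " ".join(groups)


def substitute_chars(text, mapping):
    """Substitution masking: replace each character found in `mapping`."""
    return text.translate(str.maketrans(mapping))


if __name__ == "__main__":
    print(mask_card_number("4539 1488 0343 7598"))  # **** **** **** 7598
    print(substitute_chars("ROBERT", {"R": "L"}))   # LOBELT
```

Simple substitution like this keeps the shape of the data but is easy to reverse if the mapping is guessed, so it is usually combined with other techniques.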

Pseudonymization

Pseudonymization is the process of removing identifiers from a data set and replacing them with a pseudonym. The main aim of this anonymization technique is to ensure that particular data can’t be matched to an identifiable person unless it is combined with a separate set of information.

A simple method of pseudonymizing data is substituting a person’s name with a fake name (a pseudonym). For example, if a user submits the name “Jane” during registration, your main database can simply store it as “Person 2647.” The lookup table mapping Person 2647 to Jane can then be stored in a separate, secure database.
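A rough sketch of this idea in Python follows. The `Pseudonymizer` class is hypothetical, and its in-memory dictionaries stand in for the separate secure database; a real system would persist the mapping elsewhere and restrict access to it.

```python
import secrets


class Pseudonymizer:
    """Replaces names with pseudonyms and keeps the mapping separately."""

    def __init__(self):
        # Assumption: these dicts stand in for a separate, secured store.
        self._name_to_pseudonym = {}
        self._pseudonym_to_name = {}

    def pseudonymize(self, name):
        # Reuse the existing pseudonym so one person maps to one label.
        if name in self._name_to_pseudonym:
            return self._name_to_pseudonym[name]
        while True:
            pseudonym = f"Person {secrets.randbelow(1_000_000)}"
            if pseudonym not in self._pseudonym_to_name:
                break
        self._name_to_pseudonym[name] = pseudonym
        self._pseudonym_to_name[pseudonym] = name
        return pseudonym

    def reidentify(self, pseudonym):
        # Only possible for whoever holds the mapping.
        return self._pseudonym_to_name[pseudonym]


if __name__ == "__main__":
    store = Pseudonymizer()
    label = store.pseudonymize("Jane")
    print(label)                    # e.g. "Person 2647" (random)
    print(store.reidentify(label))  # Jane
```

Random pseudonyms are used here instead of a hash of the name, because a hash of a common name can be reproduced by anyone who guesses the input.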

Generalization

Generalization is the process of removing more specific aspects of data to reduce its identifiability. This is essentially like zooming out, where you hide the finer details but still maintain a high level of accuracy that can be used for analysis. For example, if you have a data set that states the age of each person, it could be generalized using categories such as 21 to 25 and 26 to 30. You can also generalize an address by removing the house and block number while retaining the street name, city, or zip code.
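The age and address examples can be expressed as a short Python sketch. The function names and the address field names (`house_number`, `block`, `street`, `city`, `zip`) are assumptions made for illustration, and the five-year bands follow the 21 to 25 and 26 to 30 ranges mentioned above.

```python
from datetime import date


def generalize_age(age, width=5):
    """Map an exact age to a band such as '21 to 25'."""
    if age < 1:
        raise ValueError("age must be a positive integer")
    lower = ((age - 1) // width) * width + 1
    return f"{lower} to {lower + width - 1}"


def generalize_dob(dob):
    """Keep only the year of a date of birth."""
    return dob.year


def generalize_address(address):
    """Drop the house and block number; keep street, city, and zip code."""
    kept_fields = ("street", "city", "zip")
    return {field: address[field] for field in kept_fields if field in address}


if __name__ == "__main__":
    print(generalize_age(23))                  # 21 to 25
    print(generalize_dob(date(1985, 4, 12)))   # 1985
    print(generalize_address(
        {"house_number": "12", "block": "B", "street": "High Street",
         "city": "London", "zip": "N1 9GU"}
    ))
```

Wider bands give stronger protection but coarser analysis, so the band width is a trade-off to choose per data set.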

Data swapping

Data swapping is a simple method of anonymization that involves switching attributes in a certain column of data with others in the same column. This means that you will end up with a shuffled database that does not disclose any specific information about any natural person at the end of the process.

Assume that you have the database below.

| First Name | Last Name | D.O.B | City |
| --- | --- | --- | --- |
| John | Maxwell | 12/4/1985 | London |
| Claire | Cook | 3/7/1994 | New York |
| Matt | Jansen | 5/10/1991 | Amsterdam |
| Susan | Clark | 17/11/1989 | Stockholm |

The data can be swapped as shown below to create anonymity.

| First Name | Last Name | D.O.B | City |
| --- | --- | --- | --- |
| Matt | Clark | 5/10/1991 | London |
| Claire | Maxwell | 12/4/1985 | Amsterdam |
| Susan | Cook | 17/11/1989 | Stockholm |
| John | Jansen | 3/7/1994 | New York |
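One way to produce a swap like this is to shuffle each column independently, as in the Python sketch below. The function name `swap_columns` is hypothetical, and the random shuffle is an assumption about how the swap is chosen; it will generally produce a different arrangement from the table above and may leave some values in their original row.

```python
import random


def swap_columns(rows, columns, seed=None):
    """Return a copy of `rows` with each listed column shuffled independently."""
    rng = random.Random(seed)
    swapped = [dict(row) for row in rows]  # leave the input untouched
    for column in columns:
        values = [row[column] for row in rows]
        rng.shuffle(values)
        for row, value in zip(swapped, values):
            row[column] = value
    return swapped


if __name__ == "__main__":
    people = [
        {"First Name": "John", "Last Name": "Maxwell", "D.O.B": "12/4/1985", "City": "London"},
        {"First Name": "Claire", "Last Name": "Cook", "D.O.B": "3/7/1994", "City": "New York"},
        {"First Name": "Matt", "Last Name": "Jansen", "D.O.B": "5/10/1991", "City": "Amsterdam"},
        {"First Name": "Susan", "Last Name": "Clark", "D.O.B": "17/11/1989", "City": "Stockholm"},
    ]
    for row in swap_columns(people, ["First Name", "Last Name", "D.O.B", "City"]):
        print(row)
```

Because every column keeps the same set of values, column-level statistics such as the distribution of cities are preserved, while the link between values in a single row is broken.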

The Pros & Cons of Data Anonymization

There are many advantages derived from data anonymization which mostly center on privacy.

Advantages of data anonymization

Prevents data misuse

According to the 2021 Verizon Data Breach Investigations Report, insiders are responsible for around 22% of security incidents. Data anonymization helps prevent unintentional misuse or exposure by users authorized to access sensitive data.

Easy to implement

Anonymization mostly uses simple algorithms to swap, generalize, pseudonymize, or mask particular data. This makes the process cost effective, fast, and easy.

Acts as a damage control measure

No system is 100% foolproof, so you always need to prepare for a possible breach. If one occurs, data anonymization can help protect sensitive data from compromise, as the data wouldn’t make much sense to the attacker. This also helps limit the damage caused by data loss in a database breach.

Regulatory compliance

The European Union’s GDPR encourages pseudonymization as a safeguard for the personal data of individuals in the EU, and data that has been truly anonymized is no longer classified as personal data. Anonymized data can therefore be used for broader purposes without breaching compliance regulations, whereas pseudonymized data is still treated as personal data under the GDPR.

Enhances business performance

Since anonymized data can be analyzed and used without breaching compliance standards, businesses can use the data to get insights into their customers and offer better and improved services.

Protects business and brand reputation

Data anonymization is part of the larger duty of an organization to protect sensitive, personal, and confidential data. The loss or breach of this information can lead to a loss of trust and market share.

Disadvantages of Data Anonymization

Less accurate analysis

Reducing the granularity of the data stored and analyzed results in less meaningful information and less accurate insights.

Doesn’t maintain data relationships

Data anonymization reduces the granularity and the accuracy of the data, hence in some cases scrambles the relationships between data points. The relationships that are lost are critical for any artificial intelligence or data science activity. Therefore, anonymized data is limited in the utility that can be derived from it.

Only useful for aggregate data

Data anonymization is only useful if you need to summarize aggregate data, since the goal of these methods is to compute statistics on the data sets. The technique cannot be used to analyze individual record-level data, where the personally identifiable data is highly relevant to the analysis. In health research, for example, this means that if analysis reveals that a specific subject is at high risk for a fatal disease, there is no way to identify that individual and alert them to the findings. Data anonymization also renders the data useless for personalizing targeted offers, as the ability to connect insights with an individual has been destroyed.

Privacy risk remains

Most forms of data anonymization can be reverse engineered by acquiring an external data set. For example, in the case of pseudonymization, if an insider already has access to pseudonymized data, they would only need to gain access to the pseudonym database to de-anonymize the entire data set. In a recent case, a newspaper purchased anonymized Grindr user data from a third-party broker and used device ID and location data to re-identify an account as belonging to a local priest. The newspaper published the information and the priest subsequently resigned. Although anonymization reduces the risk, it is still far too easy to re-identify anonymized data.
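To illustrate how an external data set enables re-identification, here is a minimal Python sketch of a linkage on quasi-identifiers. The function name `link_records`, the field names, and all records are hypothetical; the sketch assumes the attacker holds a second data set that contains names alongside the same quasi-identifiers.

```python
def link_records(anonymized, external, quasi_identifiers):
    """Attach a name to each anonymized record that matches exactly one
    person in the external data set on all quasi-identifiers."""
    index = {}
    for person in external:
        key = tuple(person[q] for q in quasi_identifiers)
        index.setdefault(key, []).append(person)

    matches = []
    for record in anonymized:
        key = tuple(record[q] for q in quasi_identifiers)
        candidates = index.get(key, [])
        if len(candidates) == 1:  # a unique match re-identifies the record
            matches.append({**record, "name": candidates[0]["name"]})
    return matches


if __name__ == "__main__":
    # Hypothetical "anonymized" data: names removed, DOB reduced to year.
    anonymized = [
        {"birth_year": 1985, "city": "London", "purchases": 14},
        {"birth_year": 1994, "city": "New York", "purchases": 3},
    ]
    # Hypothetical external data, for example a public directory.
    external = [
        {"name": "Person A", "birth_year": 1985, "city": "London"},
        {"name": "Person B", "birth_year": 1994, "city": "New York"},
        {"name": "Person C", "birth_year": 1994, "city": "New York"},
    ]
    print(link_records(anonymized, external, ["birth_year", "city"]))
```

In this made-up example only the London record is re-identified, because two people share the New York combination. The fewer people who share a combination of attributes, the easier the record is to single out.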

Data cannot be linked across multiple data sources

Anonymized data cannot be linked at the record level across multiple databases, for example when combining patient data from a genomic database, a clinical database, and a wearable database, or, in a fintech setting, when linking data on individuals across banks, telcos, and insurers. The key on which the records would be linked is exactly the identifier these techniques eliminate.

No control over data usage in collaboration settings

Anonymization techniques do not give data owners any control over what is done with the data once it has been anonymized and transferred to a third party. Once the third party receives the anonymized data, it can use it in many ways, including to re-identify the data, as happened in the famous Netflix data de-anonymization scandal.

Data Anonymization Techniques – Pros and Cons

The main benefit of data anonymization is that it is an easy, inexpensive way to protect privacy when performing analysis on aggregated or individual data. However, in most cases, the shortcomings far outweigh the benefits. Data anonymization produces less accurate results and does not allow for data linkage. It’s also not very secure, and re-identification is easily achieved. Nor does it allow any control over how the data and models are used, or any protection of the data and model IP. Yet perhaps the most challenging aspect of data anonymization comes when one wants to collaborate with third parties. Data cannot be linked across multiple databases when it’s anonymized. The same goes for aggregating anonymized data: duplicates cannot be removed, which creates biased data sets.

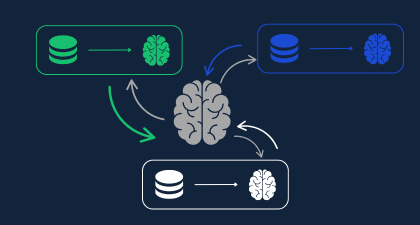

Data anonymization techniques are mentioned explicitly as a required or accepted technique by many data privacy regulations, but that doesn’t mean they are secure. It really depends on the type of analysis and the utility one is aiming to gain. The selection of privacy enhancing tools and technologies needs to be considered on a case-by-case basis, but data anonymization should be used very cautiously, as it has proven to be fairly easy to breach. Data-driven enterprises looking for ways to derive more value from data require a holistic privacy preserving data collaboration platform that allows flexible selection and combination of multiple privacy enhancing technologies (PETs) as needed across the organization and data sources.
