In 2026, secure data sharing goes beyond encrypting files and setting access permissions. Organizations in regulated fields like finance, healthcare, and AI face a deeper challenge: enabling collaboration while maintaining control over sensitive data.

The difficulty lies in managing what happens after access is granted: how data is queried, combined, and reused. Most legacy security tooling was not designed for this complexity, and that gap explains why modern secure data sharing looks fundamentally different.

Core Reality

Secure data sharing is not about moving data safely.

It is about controlling what happens after access is granted.

The Core Challenge of Data Sharing

Data sharing creates a structural tension that can only be managed, not eliminated. Data becomes valuable only when used, yet every use introduces risk. The moment data moves between teams, organizations, or systems, you lose some degree of control.

This tension grows in modern environments where:

- The same dataset may be used for analytics, operations, and AI training.

- Multiple parties require access at the same time.

- Regulations apply different constraints to the same data depending on the context.

The core issue is the unpredictable nature of data usage once access is granted. Data can be copied, joined with other datasets, or queried in ways that reveal more than intended. Most systems operate as if granting access is the final step, when it is really the beginning of the risk lifecycle.

Why Data Sharing Is Harder in 2026

| Challenge | Why It Matters |
| --- | --- |
| Multi-use datasets | Same data used for AI, analytics, ops → conflicting constraints |
| Multi-party access | More users = more risk surfaces |
| Regulatory overlap | Different rules for same dataset |
| Unpredictable usage | Queries reveal more than intended |

Why Sensitive Data Changes the Architecture

As soon as data becomes sensitive, such as personal information, financial records, or health data, the architecture must adapt.

You can no longer assume:

- The infrastructure is fully trusted.

- Users will behave as intended.

- Outputs are harmless if inputs are protected.

Instead, systems must enforce constraints at multiple levels. Data often needs to stay in specific jurisdictions. Computation must be restricted, and outputs must be controlled to prevent inference. This shifts secure data sharing from simple pipelines toward policy-driven systems.

The architecture must continuously answer questions like:

- Who is accessing this data right now?

- Under what conditions?

- For what purpose?

- What can they actually extract from it?

If your system cannot answer these questions in real time, it lacks true security.

The Hardest Case: Air-Gapped and Restricted Data

The most extreme version of this problem occurs in air-gapped environments: systems with no external connectivity. These exist in defense, critical infrastructure, and highly sensitive research.

Here, the concept of “data sharing” itself breaks down. With no APIs, external queries, or cloud collaboration, a different model is needed. Instead of sharing data, organizations share controlled interactions with it.

This often takes the form of:

- Moving code or models into the environment rather than exporting data.

- Using tightly controlled export/import pipelines.

- Running computations inside isolated enclaves.

The absolute constraint is that data cannot leave freely. Every interaction must be deliberate, auditable, and controlled. This is the clearest example of where the industry is heading, even in less restrictive environments.

What secure data sharing solutions are suitable for government contractors with high-security requirements?

Government contractors require solutions with strict data sovereignty controls, air-gapped or isolated environments, and hardware-backed security like TEEs. Zero-trust architectures and policy-enforced access are essential, along with full compliance with national security standards.

From Data Sharing to Secure Data Sharing

Traditional data sharing focuses on movement: transferring data from one system to another. Secure data sharing focuses on control, allowing usage without surrendering ownership.

That shift introduces new requirements. Access must be revocable, usage must be observable, and outputs must be bounded. Policies must be enforced dynamically, not just configured once.

This is why modern approaches increasingly rely on:

- Confidential computing

- Privacy-enhancing technologies

- Zero-trust principles

The goal is to govern how data is used throughout its entire lifecycle.

Why Traditional Data Sharing Models Fail

Legacy approaches fail because they assume a level of trust that no longer exists.

Bulk data exports, for example, are still common. Data is copied into a partner’s environment, effectively removing it from the owner’s control. Contracts may exist, but enforcement is weak.

Centralized data lakes present a different problem. Aggregating everything into one environment creates a massive blast radius. A single breach can expose entire datasets.

Static anonymization is often treated as a solution, but it rarely holds up. With enough auxiliary data, re-identification is possible. More importantly, anonymization does nothing to prevent the misuse of the data itself.
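The re-identification risk can be made concrete with a small linkage sketch. Everything here is hypothetical: the records, the names, and the choice of ZIP code, birth year, and sex as quasi-identifiers are assumptions chosen for illustration.

```python
# Hypothetical "anonymized" release: names removed, quasi-identifiers kept.
released = [
    {"zip": "02139", "birth_year": 1984, "sex": "F", "diagnosis": "asthma"},
    {"zip": "02139", "birth_year": 1990, "sex": "M", "diagnosis": "diabetes"},
    {"zip": "94110", "birth_year": 1984, "sex": "F", "diagnosis": "migraine"},
]

# Hypothetical auxiliary data an attacker could obtain from another source.
public_records = [
    {"name": "Alice Example", "zip": "02139", "birth_year": 1984, "sex": "F"},
    {"name": "Bob Example", "zip": "02139", "birth_year": 1990, "sex": "M"},
]

QUASI_IDENTIFIERS = ("zip", "birth_year", "sex")


def key(row):
    """Combine the quasi-identifier values into one lookup key."""
    return tuple(row[q] for q in QUASI_IDENTIFIERS)


def reidentify(released_rows, auxiliary_rows):
    """Link people to released rows whose quasi-identifier combination is unique."""
    by_key = {}
    for row in released_rows:
        by_key.setdefault(key(row), []).append(row)
    matches = {}
    for person in auxiliary_rows:
        candidates = by_key.get(key(person), [])
        if len(candidates) == 1:  # exactly one candidate means re-identified
            matches[person["name"]] = candidates[0]["diagnosis"]
    return matches


linked = reidentify(released, public_records)
```

No name was ever released, yet both people in the auxiliary list are linked to a diagnosis because their attribute combination is unique in the release.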

The common thread is that these models apply security before sharing, rather than enforcing it continuously during use.

From Principles to Practice: Applying Secure Data Sharing in 2026

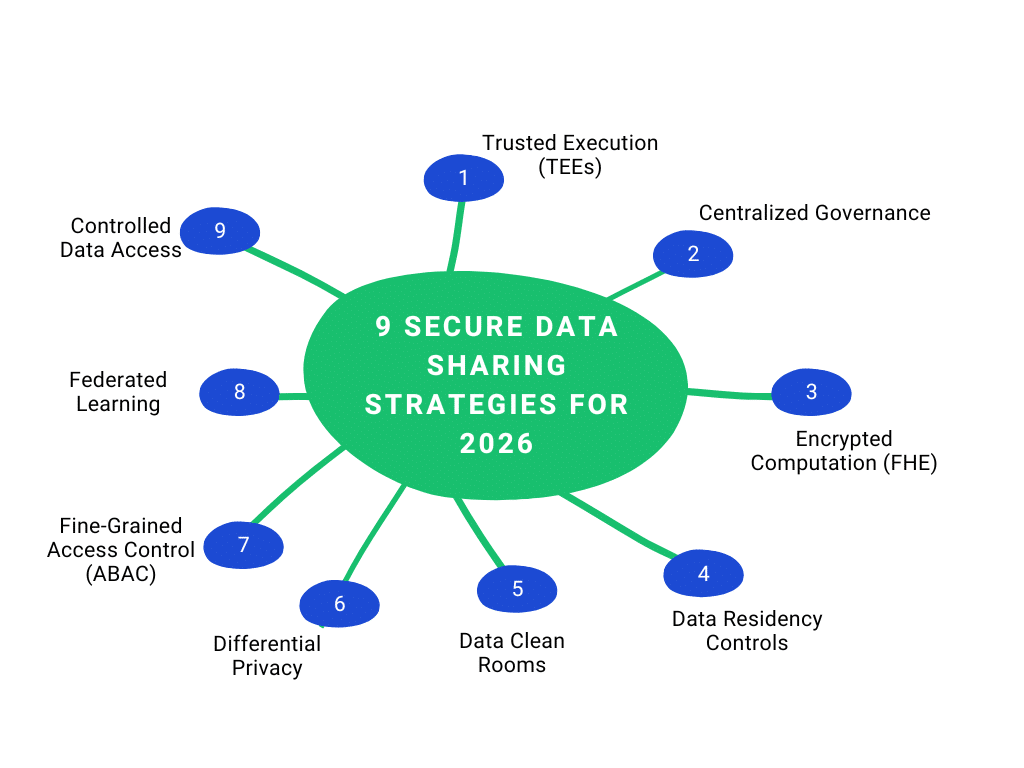

To overcome these limitations, organizations are adopting architectural strategies that prioritize control over movement. These strategies are not interchangeable; each addresses a specific failure in traditional systems. While individually useful, their real value comes from being combined.

Data Strategy 1. Shift from Data Movement to Controlled Data Access

The most important conceptual shift is to stop copying data. Instead of exporting datasets, organizations expose controlled access mechanisms. A partner receives the ability to query data under strict conditions, not the data itself.

In practice, this might be a secure query interface where only certain fields are visible, sensitive columns are masked, and queries are restricted. APIs can enforce similar limits on who can access data and how. This approach keeps the data in its original environment, where policies can be enforced consistently, access can be revoked instantly, and every interaction can be logged.
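A minimal sketch of such an interface is shown here, assuming an in-memory table. The `QueryGateway` class, its field names, and the row cap are hypothetical and do not correspond to any particular product.

```python
from datetime import datetime, timezone


class QueryGateway:
    """Hypothetical gateway: partners query through it and never receive the table."""

    def __init__(self, rows, visible, masked, max_rows):
        self._rows = rows
        self._visible = set(visible)   # fields returned as-is
        self._masked = set(masked)     # fields returned as "***"
        self._max_rows = max_rows      # cap that blocks bulk extraction
        self._grants = set()
        self.audit_log = []

    def grant(self, partner):
        self._grants.add(partner)

    def revoke(self, partner):
        self._grants.discard(partner)  # takes effect on the next query

    def query(self, partner, fields, where=None):
        where = where or {}
        allowed = (
            partner in self._grants
            and set(fields) <= (self._visible | self._masked)
            and set(where) <= self._visible  # no filtering on masked columns
        )
        # Every attempt is logged, whether or not it is allowed.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "partner": partner,
            "fields": list(fields),
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError("query rejected by policy")
        matches = [r for r in self._rows if all(r[k] == v for k, v in where.items())]
        return [
            {f: "***" if f in self._masked else r[f] for f in fields}
            for r in matches[: self._max_rows]
        ]


gateway = QueryGateway(
    rows=[
        {"id": 1, "region": "EU", "ssn": "123-45-6789"},
        {"id": 2, "region": "US", "ssn": "987-65-4321"},
    ],
    visible=["id", "region"],
    masked=["ssn"],
    max_rows=100,
)
gateway.grant("partner-a")
eu_rows = gateway.query("partner-a", ["id", "ssn"], where={"region": "EU"})
gateway.revoke("partner-a")
```

Filtering is only permitted on visible fields, since a filter on a masked column would let a partner confirm guessed values one query at a time.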

Teams run into trouble when they undermine this model with convenience features such as bulk exports or unrestricted queries. The moment data can be freely extracted, the system’s risks revert to those of traditional sharing.

Data Strategy 2. Use Trusted Execution Environments (TEEs) for Sensitive Processing

Even if data stays in place, external computation is sometimes needed. This raises a new question: can you trust the environment performing that computation?

Trusted Execution Environments (TEEs) address this by isolating processing at the hardware level. Data is decrypted only inside a protected enclave, shielding it even from the host system. This creates a strong boundary for sensitive computation without exposing raw data. TEEs are often used when multiple parties, such as banks collaborating on fraud detection, need to work together without full trust.

However, TEEs have drawbacks. They add performance overhead and operational complexity. They also shift trust to the code running inside the enclave and the hardware, rather than eliminating it.
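Because trust shifts to the enclave code, data owners typically check what code is running before releasing any decryption key. The sketch below simulates that check in plain Python. It is not a real TEE SDK: the shared `HARDWARE_KEY` and the HMAC signature are stand-ins for the hardware-rooted asymmetric signatures that real attestation relies on, and all function names are hypothetical.

```python
import hashlib
import hmac

# Assumption: stand-in for a vendor-rooted signing key held by the hardware.
HARDWARE_KEY = b"simulated-hardware-root-key"


def measure(code: bytes) -> str:
    """Hash of the code loaded into the (simulated) enclave."""
    return hashlib.sha256(code).hexdigest()


def make_quote(code: bytes) -> dict:
    """Simulated enclave side: report the code measurement, signed by 'hardware'."""
    measurement = measure(code)
    signature = hmac.new(HARDWARE_KEY, measurement.encode(), hashlib.sha256).hexdigest()
    return {"measurement": measurement, "signature": signature}


def release_key(quote: dict, expected_measurement: str, data_key: bytes):
    """Data-owner side: hand over the key only to genuine, approved code."""
    expected_sig = hmac.new(
        HARDWARE_KEY, quote["measurement"].encode(), hashlib.sha256
    ).hexdigest()
    if not hmac.compare_digest(expected_sig, quote["signature"]):
        return None  # quote was not produced by the trusted hardware
    if not hmac.compare_digest(quote["measurement"], expected_measurement):
        return None  # enclave is running code the owner did not approve
    return data_key


approved_code = b"def score(txns): return sum(t.amount for t in txns)"
expected = measure(approved_code)

key_for_approved = release_key(make_quote(approved_code), expected, b"data-key")
key_for_tampered = release_key(
    make_quote(approved_code + b"  # exfiltrate"), expected, b"data-key"
)
```

The approved code receives the key, while any modified build produces a different measurement and receives nothing.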

Data Strategy 3. Apply Federated Learning for Distributed AI Training

AI has intensified the need for secure data sharing. Training effective models requires data from multiple sources, but centralizing it is often infeasible. Federated learning offers a solution by bringing the model to the data. Each participant trains a model locally and shares only the updates.

This reduces exposure significantly but does not eliminate risk. Model updates can still leak information, and malicious participants can try to manipulate the model. To be viable in regulated environments, federated learning must be combined with techniques like secure aggregation and differential privacy. On its own, it is a collaboration tool, not a complete security solution.
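A simplified sketch of secure aggregation follows, assuming three participants and toy update vectors. Real protocols derive each pairwise mask from a secret shared between two participants and use modular integer arithmetic; here a single function generates all masks with floats purely to keep the example short.

```python
import random


def masked_updates(updates, seed=0):
    """Add pairwise masks that cancel in the sum, hiding each individual update."""
    rng = random.Random(seed)
    n, dim = len(updates), len(updates[0])
    masked = [list(u) for u in updates]
    for i in range(n):
        for j in range(i + 1, n):
            mask = [rng.uniform(-1.0, 1.0) for _ in range(dim)]
            for k in range(dim):
                masked[i][k] += mask[k]  # participant i adds the shared mask
                masked[j][k] -= mask[k]  # participant j subtracts the same mask
    return masked


def federated_average(vectors):
    """Server side: element-wise mean of the vectors it receives."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


# Hypothetical local model updates from three participants.
updates = [[0.10, -0.20], [0.30, 0.00], [-0.10, 0.40]]

masked = masked_updates(updates)
plain_avg = federated_average(updates)
masked_avg = federated_average(masked)
```

The server only ever sees `masked`, yet `masked_avg` matches `plain_avg` up to floating-point rounding because every mask is added once and subtracted once.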

How does secure data sharing support compliance in AI model training?

Secure data sharing allows organizations to train AI models without centralizing sensitive datasets. Federated learning keeps data local, and differential privacy limits what shared updates or outputs can reveal, while both still contribute to model development. This reduces regulatory exposure and aligns with data minimization principles.

Data Strategy 4. Enforce Fine-Grained Access Control (ABAC over RBAC)

Access control is often treated as a solved problem, but most implementations are too coarse. Role-Based Access Control (RBAC) assigns permissions by role, which is often insufficient. A role like “analyst” may grant far more access than necessary.

Attribute-Based Access Control (ABAC) adds context. Access decisions can incorporate factors like user identity, the data being accessed, request origin, and purpose. This allows for policies that align with regulations, such as purpose limitation or geographic restrictions, and reduces insider risk by narrowing access.

The trade-off is complexity. Policies are harder to manage, and misconfigurations can create new risks. Without strong governance, ABAC can become as problematic as the systems it replaces.
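The difference between the two models can be sketched as follows. The attribute names and the three rules are assumptions made for illustration, not a standard policy set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    role: str
    purpose: str
    user_region: str
    data_classification: str
    data_region: str


def rbac_allows(req: AccessRequest) -> bool:
    """Role-only check: every analyst gets the same access."""
    return req.role == "analyst"


def abac_allows(req: AccessRequest) -> bool:
    """Attribute-based check: role plus purpose and geography."""
    if req.role != "analyst":
        return False
    # Purpose limitation: health data only for approved research.
    if req.data_classification == "health" and req.purpose != "approved_research":
        return False
    # Geographic restriction: the user must be in the data's region.
    if req.user_region != req.data_region:
        return False
    return True


marketing_request = AccessRequest(
    role="analyst",
    purpose="marketing",
    user_region="EU",
    data_classification="health",
    data_region="EU",
)
research_request = AccessRequest(
    role="analyst",
    purpose="approved_research",
    user_region="EU",
    data_classification="health",
    data_region="EU",
)

rbac_decision = rbac_allows(marketing_request)
abac_decisions = (abac_allows(marketing_request), abac_allows(research_request))
```

RBAC admits the marketing request because the role matches, while the attribute-based check rejects it on purpose and admits only the research request.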

Data Strategy 5. Use Data Clean Rooms for Cross-Organization Collaboration

When multiple organizations need to analyze data together, clean rooms provide a controlled environment. Instead of exchanging datasets, each party contributes data to a shared space where queries are tightly controlled. Analysts receive aggregated or privacy-filtered results instead of raw data.

This model is effective for repeatable collaboration, like fraud detection across banks or joint healthcare research. However, clean rooms impose constraints. Analysts lose flexibility, making exploratory analysis more difficult. They work best when use cases are clearly defined in advance.
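One common clean-room control is a minimum group size on aggregated results. The sketch below assumes two banks that contribute rows keyed by a shared hashed account identifier; the field names and the threshold of 3 are hypothetical.

```python
from collections import defaultdict

MIN_GROUP_SIZE = 3  # assumed threshold; smaller groups are suppressed


def clean_room_count(bank_a, bank_b, group_by):
    """Count accounts present in both datasets, returning only large groups."""
    b_keys = {row["account_hash"] for row in bank_b}
    counts = defaultdict(int)
    for row in bank_a:
        if row["account_hash"] in b_keys:
            counts[row[group_by]] += 1
    # Raw rows never leave this function; only thresholded counts do.
    return {group: c for group, c in counts.items() if c >= MIN_GROUP_SIZE}


# Hypothetical contributions from two banks.
bank_a = [
    {"account_hash": "h0", "merchant_category": "travel"},
    {"account_hash": "h1", "merchant_category": "travel"},
    {"account_hash": "h2", "merchant_category": "travel"},
    {"account_hash": "h3", "merchant_category": "travel"},
    {"account_hash": "h4", "merchant_category": "retail"},
    {"account_hash": "h5", "merchant_category": "retail"},
]
bank_b = [{"account_hash": f"h{i}"} for i in range(5)]  # h0 through h4

overlap_by_category = clean_room_count(bank_a, bank_b, "merchant_category")
```

The single overlapping retail account is suppressed because a count of one would point at an individual customer. This is also why exploratory analysis is harder: analysts can only ask the kinds of questions the environment was built to answer.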

Data Strategy 6. Implement Homomorphic Encryption for Computation on Encrypted Data

Fully Homomorphic Encryption (FHE) is one of the strongest forms of data protection, allowing computation directly on encrypted data so the underlying information is never exposed. In theory, this removes the need to trust the compute environment. In practice, it has significant performance costs and limited flexibility.

As a result, FHE is currently used only in highly sensitive scenarios where confidentiality outweighs efficiency. It remains a specialized tool, not a general solution.
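To show the basic idea of computing on ciphertexts, here is a toy version of the Paillier cryptosystem. Two caveats apply: Paillier is only additively homomorphic, so it is a simpler relative of FHE rather than FHE itself, and the tiny hard-coded primes are an assumption that makes the example readable but offers no security.

```python
import math
import secrets


def keygen(p=1789, q=1861):
    """Toy key generation; these small primes are for illustration only."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)  # modular inverse (Python 3.8+)
    return n, (lam, mu)   # public key n, private key (lam, mu)


def encrypt(n, m):
    """Encrypt an integer 0 <= m < n under public key n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1  # random value in 1..n-1
        if math.gcd(r, n) == 1:
            break
    # With generator g = n + 1, g^m mod n^2 equals 1 + m*n.
    return ((1 + m * n) * pow(r, n, n2)) % n2


def decrypt(n, private_key, c):
    lam, mu = private_key
    ell = (pow(c, lam, n * n) - 1) // n
    return (ell * mu) % n


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)


public_n, private_key = keygen()
c_a = encrypt(public_n, 1200)  # e.g. one party's confidential amount
c_b = encrypt(public_n, 2300)  # another party's confidential amount
c_sum = add_encrypted(public_n, c_a, c_b)  # computed without decrypting
total = decrypt(public_n, private_key, c_sum)
```

The party that computes `c_sum` never sees 1200 or 2300; only the key holder can recover the total.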

Data Strategy 7. Enforce Data Residency and Sovereignty Controls

As data sharing becomes global, regulatory constraints grow. Data residency rules dictate where data can be stored and processed. These are not just storage constraints; processing location matters just as much.

Organizations must implement controls to ensure data does not cross jurisdictional boundaries. This often involves region-specific infrastructure and policy-driven access controls. Complexity increases in distributed systems, where analytics or AI workflows can span multiple regions. Without strict enforcement, it is easy to violate regulations unintentionally.
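A residency check of this kind can be sketched as a pre-flight validation on each job. The dataset names, region labels, and the `check_job` helper are all hypothetical.

```python
# Assumed policy table: where each dataset may be stored or processed.
ALLOWED_REGIONS = {
    "eu_customer_records": {"eu-west", "eu-central"},
    "us_claims": {"us-east"},
}


def check_job(dataset, storage_region, compute_regions):
    """Return the regions a job would touch that the policy does not allow."""
    allowed = ALLOWED_REGIONS.get(dataset, set())  # unknown datasets: deny all
    touched = {storage_region, *compute_regions}   # storage and processing both count
    return sorted(touched - allowed)


# A distributed analytics job that quietly spans a second jurisdiction.
violations = check_job(
    "eu_customer_records",
    storage_region="eu-west",
    compute_regions=["eu-central", "us-east"],
)
compliant = check_job(
    "eu_customer_records",
    storage_region="eu-west",
    compute_regions=["eu-central"],
)
```

The first job stores data correctly but still violates policy because one compute stage runs outside the approved regions, which is the unintentional failure mode described above.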

How can enterprises ensure data residency and regulatory compliance through secure data sharing?

Enterprises should implement geo-fencing, regional data storage, and jurisdiction-aware access policies. Data should not leave approved regions, and processing should occur within compliant environments. Policy engines must enforce these rules dynamically.

Data Strategy 8. Apply Differential Privacy for Output Protection

Even when raw data is protected, outputs can reveal sensitive information. Differential privacy addresses this by adding calibrated noise to results, which bounds how much any single individual's data can influence the output and therefore how much can be inferred about them. This is particularly important for analytics and AI systems where aggregated outputs are shared.

The challenge is balancing privacy and utility. Too much noise reduces usefulness, while too little weakens protection. Effective implementation requires careful tuning and an understanding of how the data is queried.
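The privacy and utility trade-off shows up directly in the Laplace mechanism, sketched here for a counting query. The `ages` data and the epsilon values are assumptions; the noise is sampled as the difference of two exponential draws, which follows a Laplace distribution.

```python
import random


def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: difference of two exponentials with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(values, predicate, epsilon, rng):
    """Counting query with Laplace noise calibrated to epsilon."""
    true_count = sum(1 for v in values if predicate(v))
    sensitivity = 1  # adding or removing one person changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical dataset: patient ages.
ages = [23, 35, 41, 52, 67, 70, 71, 80, 29, 66]

rng = random.Random(0)  # seeded only so the example is repeatable
strong_privacy = private_count(ages, lambda a: a >= 65, epsilon=0.1, rng=rng)
weak_privacy = private_count(ages, lambda a: a >= 65, epsilon=5.0, rng=rng)
```

With `epsilon=0.1` the noise scale is 10, so a true count of 5 can be badly distorted. With `epsilon=5.0` the scale is 0.2 and the answer is close to exact but protects individuals far less.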

Data Strategy 9. Centralize Governance with Auditable Data Sharing Policies

All these strategies depend on governance to be effective. Organizations need to track who accessed what data, under what conditions, and for what purpose. This requires structured, enforceable policies and the ability to audit them continuously.

Without governance, even well-designed systems degrade. Policies drift, access expands, and visibility is lost. Secure data sharing is an ongoing process of enforcement and verification.
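One way to make an access record verifiable is to chain entries by hash, so that later edits are detectable. This is a minimal sketch with hypothetical field names, not a complete audit system; a production log would also need signing and protected storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry
FIELDS = ("who", "dataset", "purpose", "prev")


def _digest(body):
    """Stable SHA-256 over the entry's fields."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, who, dataset, purpose):
    """Record who accessed what and why, linked to the previous entry."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"who": who, "dataset": dataset, "purpose": purpose, "prev": prev}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Recompute the chain; any edited or removed entry breaks it."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in FIELDS}
        if entry["prev"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "analyst-17", "claims_2025", "fraud_review")
append_entry(audit_log, "partner-a", "claims_2025", "joint_research")

intact = verify(audit_log)
audit_log[0]["purpose"] = "marketing"  # simulate after-the-fact tampering
tampered_detected = not verify(audit_log)
```

Continuous auditing then amounts to re-running `verify` and comparing logged purposes against the policies in force.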

The Real Shift

Secure data sharing is no longer about protecting data before it’s shared.

It’s about enforcing control throughout its entire lifecycle.

Why These Strategies Must Be Layered

A common mistake is trying to select a single “best” approach. In reality, each strategy addresses a different dimension of risk. Access control governs who can interact with data. TEEs and encryption protect how data is processed. Clean rooms define collaboration boundaries. Differential privacy controls what can be inferred from outputs. Governance ensures everything is enforced.

These layers are complementary. Removing one creates gaps that others cannot fully cover. Mature architectures adopt a defense-in-depth approach, where multiple controls work together. The goal is resilience across the entire system, not perfection at any single layer.

Orchestration: Where Secure Data Sharing Succeeds or Fails

Implementing multiple controls is not enough; they must work together. Orchestration is the layer that ensures consistency. Policies defined in one place must be enforced everywhere: across APIs, compute environments, and storage systems. Identity must be propagated reliably, and audit logs must be centralized.

Many implementations fail because the individual components are not integrated into a coherent system.

Buy vs. Build: Strategic Trade-offs

Organizations must decide how to acquire these capabilities. Building internally offers maximum control and allows systems to be tailored to specific needs, which is often necessary in highly regulated environments. However, building secure data sharing infrastructure is complex and requires significant expertise and maintenance.

Buying a platform accelerates deployment and provides access to mature capabilities. This is often the right choice for standard use cases like clean rooms. The trade-off is flexibility, as platforms may not align perfectly with internal requirements, and vendor reliance introduces its own risks.

In practice, most mature organizations adopt a hybrid approach, using platforms for core capabilities while building orchestration and policy layers internally.