The biggest hurdle for healthcare AI isn’t a lack of data, models, or good intentions. It’s access. The most valuable data for training powerful AI simply cannot be moved and centralized in the way traditional systems require.

Across the healthcare and life sciences ecosystem, the necessary datasets already exist, distributed among hospitals, research networks, and national health infrastructures. The goals are clear: improve diagnostics, speed up drug discovery, and unlock population-level health insights.

Yet progress hits a wall the moment collaboration requires data to be moved.

This is the fundamental flaw in most data-sharing architectures. They are built on an assumption of centralization, which in healthcare creates a cascade of regulatory, operational, and institutional barriers that stop innovation in its tracks.

What’s changing is how we approach sensitive data: learning from it without ever sharing it.

The Status Quo: A Centralized Dream Meets a Distributed Reality

For the past decade, the prevailing wisdom in AI development has been “more data is better.” The standard playbook, born from the tech and e-commerce industries, was straightforward:

- Pool all data: Collect vast amounts of information from various sources.

- Centralize it: Store everything in a massive, unified data lake or cloud warehouse.

- Analyze and train: Run analytics and train machine learning models on this centralized repository.

This “centralize-and-conquer” model works beautifully when the data in question is customer clicks, supply chain logs, or social media posts. Early attempts in healthcare tried to follow the same script, relying on techniques like data anonymization and de-identification to create large, “safe” datasets for research.

But these efforts quickly revealed a critical flaw: what works for clicks doesn’t work for clinical trials. The data in healthcare is not only more sensitive, but it’s also richer and more complex. Simple anonymization techniques that strip out identifiers often degrade the data so much that it loses its scientific value. Worse, studies have shown that even de-identified data can often be re-identified, leaving organizations exposed to significant privacy and compliance risks.

This has led to a painful realization across the industry: the centralized model, for all its power in other sectors, is fundamentally incompatible with the reality of healthcare data.

Where the Centralized Model Breaks Down

The roadblocks in healthcare are structural. They fall into three categories of barriers that make centralization impractical, if not impossible.

- The Regulatory Wall: Many modern data privacy laws reflect the principle of data sovereignty, the idea that data is subject to the laws of the country in which it was collected. Regulations like Europe’s GDPR, and a growing number of similar national laws, strictly govern, and in some cases forbid, the movement of sensitive health information across borders. This makes creating a centralized global health database legally unworkable.

- The Commercial and IP Wall: In the life sciences, data is the product. A pharmaceutical company’s clinical trial data is one of its most valuable assets. A research hospital’s genomic data represents a core part of its intellectual property. Asking these organizations to upload their raw data to a central repository is like asking them to give away their crown jewels. It’s a non-starter from a business perspective.

- The Operational Wall: Even if the legal and commercial hurdles could be magically cleared, the practical challenges are immense. Hospitals use different electronic health record (EHR) systems with incompatible data formats. Legal teams spend months, sometimes years, negotiating complex Data Use Agreements (DUAs) for a single collaboration. The sheer cost and complexity of standardizing and physically moving petabytes of sensitive data are often prohibitive.

This is why so many promising AI initiatives stall. The traditional model of moving data first is fundamentally broken for healthcare. It forces organizations into an impossible choice: either risk non-compliance and loss of IP, or don’t collaborate at all.

A New Paradigm: From Data Sharing to Data Collaboration

Out of necessity, a more viable approach is taking hold. Instead of asking how to move data safely, leading organizations are asking how to generate insights from data where it already lives.

This shift flips the architecture of collaboration on its head. Computation is no longer centralized; it’s distributed to the data’s source. Each institution retains full control and security while still participating in powerful, shared analytical workflows.

This model enables a much richer set of use cases. Organizations can explore datasets, run analytics, and train models across institutional and national boundaries, all without exposing a single raw data point.

What Are Privacy-Enhancing Technologies (PETs)?

Privacy-Enhancing Technologies (PETs) are the technical foundation for this new era of secure data collaboration. They are a suite of tools that allow data to be used for analysis and machine learning without ever exposing the underlying information.

Unlike traditional security that just restricts access, PETs enable computation directly on protected data. The core technologies include:

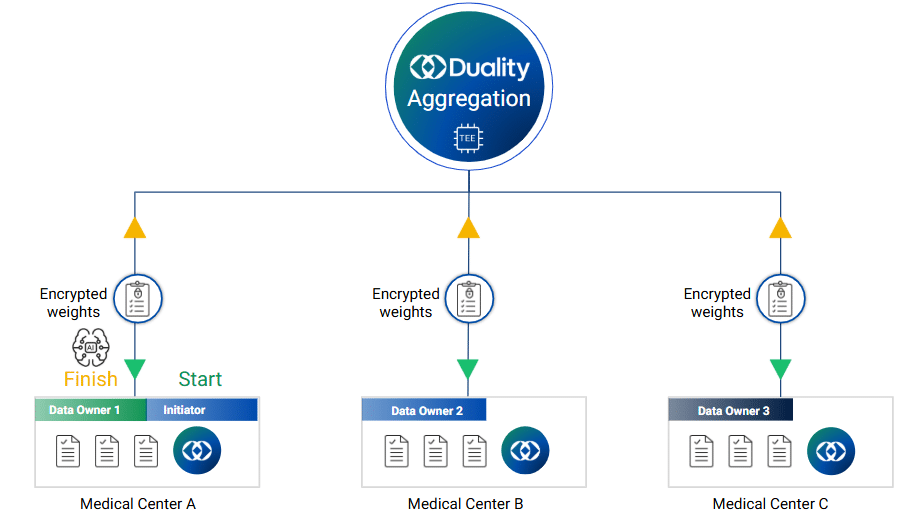

- Federated Learning Architecture: Enables training a shared AI model across multiple decentralized datasets without moving them.

- Differential Privacy: A mathematical guarantee that limits how much any single individual’s record can influence a query output, so released results reveal very little about any one person in the data.

- Trusted Execution Environments (TEEs): Secure hardware enclaves that isolate and protect data even while it’s being processed.

- Homomorphic Encryption: Allows computation to be performed directly on encrypted data, so inputs stay encrypted for the entire time they are being processed.

Together, these technologies make secure collaboration on sensitive data a practical reality.
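To make the federated learning idea concrete, here is a minimal sketch of federated averaging in plain Python. It is an illustration under stated assumptions, not a production design: the one-parameter model, the `hospital_a` and `hospital_b` toy datasets, and all function names are hypothetical. Each site trains on its own data and sends back only a model weight and a sample count.

```python
from typing import List, Tuple

Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave a site


def local_update(w: float, data: Dataset, lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient-descent steps for y ~ w * x on one site's data."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Combine site weights, weighting each by its local sample count."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(sites: List[Dataset], rounds: int = 50) -> float:
    """Coordinator loop: broadcast w, collect local updates, average them."""
    w = 0.0
    for _ in range(rounds):
        updates = [(local_update(w, data), len(data)) for data in sites]
        w = federated_average(updates)
    return w


# Hypothetical toy data: both sites follow y = 2x, but hold different records.
hospital_a = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
hospital_b = [(4.0, 8.0), (5.0, 10.0)]

w = train([hospital_a, hospital_b])
print(f"shared model weight: {w:.4f}")
```

The coordinator only ever sees weights and counts, never the `(x, y)` records. In practice, model updates can still leak information, which is one reason federated learning is usually combined with the other PETs listed above.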

How PETs Drive Real-World Healthcare Collaboration

In the real world, collaboration is a multi-step process. PETs can be applied at each stage to ensure security without sacrificing results.

| Collaboration Stage | What Needs to Happen | PETs Involved |
| --- | --- | --- |
| Data Discovery | Identify relevant datasets across institutions | Secure Querying, Homomorphic Encryption |
| Analytics | Generate insights locally from protected data | TEEs, Privacy-Preserving Computation |
| Model Training | Build powerful, shared predictive models | Federated Learning Architecture |
| Output Sharing | Aggregate and share results without risk | Differential Privacy |

This layered approach moves beyond simply training models; it creates a framework for continuous and secure interaction with distributed data.
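As a rough illustration of the output-sharing stage, the sketch below adds Laplace noise to a count before it is released, which is the classic Laplace mechanism for differential privacy. The function name, the `epsilon` value, and the example count are assumptions made for illustration. Real systems should rely on a vetted differential privacy library, because naive floating-point noise sampling has known weaknesses.

```python
import random


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-differential privacy (Laplace mechanism).

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1 and the Laplace noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    # The difference of two independent Exponential(rate=epsilon) draws
    # follows a Laplace distribution with mean 0 and scale 1 / epsilon.
    noise = rng.expovariate(epsilon) - rng.expovariate(epsilon)
    return true_count + noise


# Hypothetical example: 42 matching patients, released with epsilon = 0.5.
# SystemRandom draws from the OS entropy source rather than a seeded generator.
noisy = private_count(42, epsilon=0.5, rng=random.SystemRandom())
print(f"released count: {noisy:.1f}")
```

A smaller `epsilon` means more noise and stronger privacy. Choosing it, and tracking the total privacy budget spent across many queries, is a governance decision as much as a technical one.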

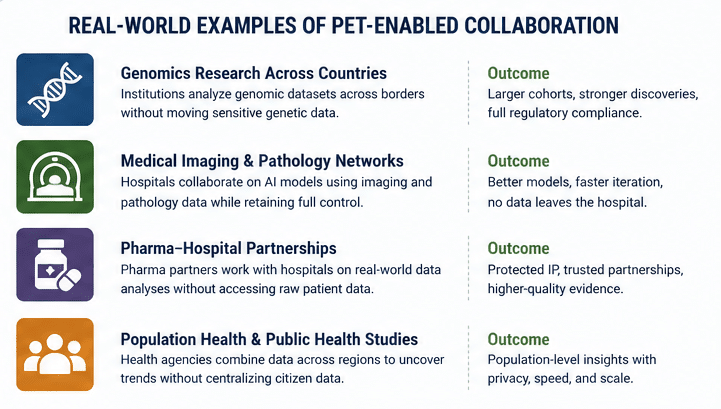

Real-World Use Case: Cross-Border Pediatric Cancer Research

The value of this approach becomes clearer when applied to a real collaboration problem.

In pediatric cancer research, one of the biggest challenges is scale. These are rare diseases by definition, which means no single institution, or even country, has enough data to generate meaningful insights on its own.

Over the last year, the UK’s Department for Science, Innovation and Technology (DSIT) has piloted the use of novel Privacy Enhancing Technologies (PETs) to enable NHS England’s National Disease Registration Service (NDRS) and the US National Cancer Institute (NCI) to study ultra-rare childhood tumours securely, lawfully, and at speed, while protecting patient privacy and keeping NHS data under NHS control.

Researchers across the UK and US needed to analyze sensitive patient datasets across borders, but strict regulatory, privacy, and security constraints made traditional data sharing effectively impossible.

Instead of trying to centralize the data, they changed the model.

Each institution kept its data within its own environment. Computation was distributed to each site, where analysis was performed locally. Only encrypted results were shared, aggregated securely inside trusted environments, and protected with differential privacy to prevent re-identification.
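The general pattern of computing locally and combining only protected results can be sketched with additive secret sharing. This is a simpler stand-in technique chosen for illustration, not the encrypted, enclave-based aggregation the pilot used. The site names, the counts, and the `MODULUS` value are all hypothetical.

```python
import secrets
from typing import Dict, List

MODULUS = 2**61 - 1  # assumed modulus; the true total must stay below it


def make_shares(value: int, n_parties: int) -> List[int]:
    """Split value into n random-looking shares that sum to it mod MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % MODULUS
    return shares + [last]


def secure_sum(site_counts: Dict[str, int]) -> int:
    """Total the sites' local counts without any site revealing its own."""
    sites = list(site_counts)
    n = len(sites)
    # Step 1: each site splits its local count; share j is sent to site j.
    shares_by_sender = [make_shares(site_counts[s], n) for s in sites]
    # Step 2: each site adds the shares it received and publishes that sum.
    partial_sums = [
        sum(shares_by_sender[i][j] for i in range(n)) % MODULUS
        for j in range(n)
    ]
    # Step 3: the published partial sums add up to the true total.
    return sum(partial_sums) % MODULUS


# Hypothetical local case counts computed inside each institution.
local_counts = {"site_uk": 17, "site_us": 25}
print("combined count:", secure_sum(local_counts))
```

Each individual share is uniformly random, so a published partial sum reveals nothing about a single site's count by itself. Only the combined total is meaningful, and that total could then have differential privacy noise added before release.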

This changed what was operationally possible.

Researchers were able to execute over 450 federated queries across datasets in different countries, analyzing incidence, survival, and other key metrics without exposing raw data.

The impact was measurable:

- Time to insight dropped from roughly 23 months to about 2 months

- Cross-border compliance was maintained across HIPAA, GDPR, and UK standards

- Rare disease datasets were analyzed collectively without centralization

This is the difference between a collaboration that stalls and one that moves forward. Not because constraints disappeared, but because the architecture adapted to them.

Insight

The breakthrough wasn’t access to more data. It was the ability to use data that couldn’t be shared.

Putting Federated Learning and PETs into Practice

Federated learning architectures and PETs are no longer experimental. Making them work, however, requires designing workflows around the data’s constraints, not in spite of them.

Successful implementations start by keeping data exactly where it is and integrating computation into existing infrastructure. This avoids the friction of building new centralized systems and naturally aligns with data sovereignty regulations.

From there, orchestration is critical. Institutions need a coordinated way to manage how computations are run, models are updated, and results are governed. This includes defining clear, enforceable policies for the entire workflow.

The most effective deployments start with the collaboration goal and apply the right combination of PETs to solve specific constraints. It’s about building a unified system, not just using isolated tools.

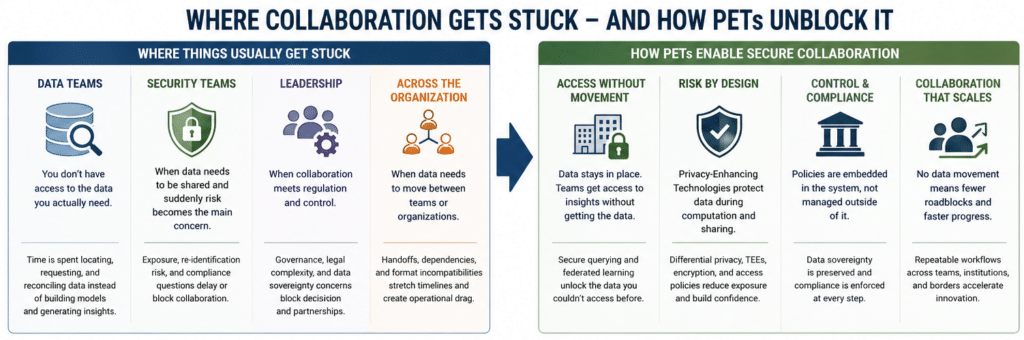

Why This Problem Looks Different Depending on Your Role

The point where collaboration breaks down is consistent, but how it shows up depends on where you sit in the organization.

- Data teams: The issue is usually direct. The models are defined, the questions are clear, but the data needed to answer them sits in other systems, institutions, or partner environments. Access becomes the bottleneck. Instead of working on analysis or model development, teams spend time trying to locate, request, and reconcile datasets that never fully arrive.

- Security and privacy teams: The same moment looks different. The challenge is exposure. As soon as data needs to move between organizations, the risk profile changes. Questions around re-identification, data leakage, and compliance take priority. What begins as a collaboration initiative quickly becomes a risk management exercise.

- Leadership: The friction shows up as a governance problem. Collaboration often requires navigating regulatory frameworks, institutional policies, and questions of control. Even when there is alignment on the value of a project, progress slows when it becomes unclear how to proceed without introducing legal or operational risk.

- Across the organization: The pattern is simpler but just as limiting. Work slows down the moment data needs to move between teams or institutions. Dependencies increase, timelines stretch, and what should be a collaborative process turns into a series of handoffs.

These are not separate problems. They are different expressions of the same underlying constraint: collaboration depends on data that cannot easily be shared.

Building Scalable Collaboration in Healthcare Consortia

Scaling collaboration requires more than just technology; it requires aligning infrastructure, policy, and incentives across multiple institutions.

In a consortium, participants have different systems, priorities, and data standards. A federated, PET-based approach allows each organization to maintain its autonomy while contributing to a shared goal. By focusing on controlled computation rather than data access, each participant exposes only what is necessary.

This creates a powerful network effect. The long-term advantage of PET-based collaboration is its interoperability. As more institutions adopt compatible architectures, each new collaboration becomes easier to initiate and scale, which is why major healthcare initiatives are now investing in these models as core infrastructure.

The Deeper Shift: From Agreements to Enforced Trust

Traditional collaboration relies on legal agreements to define how data should be handled. These are necessary, but they don’t provide technical enforcement. PETs change this by embedding the rules directly into the system.

Data stays in place. Computation is constrained. Outputs are governed.

The system itself enforces the policy, reducing the need for implicit trust between organizations. For healthcare consortia, this is a game-changer. Collaboration can finally scale because it is technically controlled, not just contractually defined.
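One way to picture “the system itself enforces the policy” is a small gate that sits in front of the data and refuses any computation the policy does not allow. The sketch below is a simplified assumption of how such a gate might look. The `Policy` fields, the operation names, and the minimum cohort size are hypothetical and not drawn from any specific platform.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List


class PolicyViolation(Exception):
    """Raised when a requested computation is not permitted."""


@dataclass(frozen=True)
class Policy:
    allowed_ops: FrozenSet[str]   # which aggregate operations may run
    min_cohort_size: int          # refuse results over very small groups


# Only aggregate operations exist; there is no way to request raw rows.
OPS: Dict[str, Callable[[List[float]], float]] = {
    "count": lambda xs: float(len(xs)),
    "mean": lambda xs: sum(xs) / len(xs),
}


def run_query(op: str, records: List[float], policy: Policy) -> float:
    """Run an aggregate over local records only if the policy permits it."""
    if op not in policy.allowed_ops or op not in OPS:
        raise PolicyViolation(f"operation '{op}' is not permitted")
    if len(records) < max(policy.min_cohort_size, 1):
        raise PolicyViolation("cohort too small to release a result")
    return OPS[op](records)


# Hypothetical site policy and local data (for example, survival in months).
policy = Policy(allowed_ops=frozenset({"count", "mean"}), min_cohort_size=5)
survival_months = [12.0, 30.0, 18.0, 24.0, 36.0]

print(run_query("mean", survival_months, policy))   # permitted aggregate
try:
    run_query("mean", survival_months[:2], policy)  # cohort of 2 is refused
except PolicyViolation as err:
    print("blocked:", err)
```

The data owner sets the policy once, and every incoming request is checked against it in code, rather than relying on each partner to follow the terms of an agreement.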

What To Do When You Need Data You Can’t Access

Most organizations recognize the problem long before they have a clear path forward. The pattern is familiar: the data exists, the use case is defined, but progress stops because access is blocked.

The next step is not to push harder on data sharing. It is to reframe how the collaboration is structured.

The first move is to identify where the constraint actually sits. In most cases, it is a combination of regulatory, security, and control requirements that make data movement impractical. Treating those constraints as fixed, not temporary obstacles, leads to better architectural decisions.

From there, the focus shifts to designing workflows that operate across data, rather than trying to consolidate it. This means defining what needs to be computed, where that computation should happen, and what outputs are actually required.

In practice, this often starts small. A limited analytical use case, a defined cohort, or a specific model can serve as the entry point. Instead of attempting to solve for full-scale data integration, organizations can validate collaboration using distributed approaches.

Technology selection follows the workflow, not the other way around. Federated learning architecture may be the right fit for model training, while earlier stages may rely more on secure querying or encrypted computation. The goal is not to deploy every PET, but to apply the right ones where constraints exist.

Equally important is governance. Collaboration needs clear rules around what can be computed, how results are shared, and how policies are enforced. The difference is that, with PETs, these rules can be embedded into the system itself.

Insight

Progress comes from changing the architecture of collaboration, not from forcing data into systems that were never designed for it.

Over time, this approach creates a repeatable model. What begins as a single constrained use case becomes a framework for broader collaboration, across teams, institutions, and eventually entire consortia.

Conclusion

For years, the promise of healthcare AI has been limited by a single constraint: the inability to use sensitive data across institutional and geographic boundaries.

Privacy-enhancing technologies, with federated learning as a core component, are finally removing that constraint. By enabling computation without data exposure, PETs allow organizations to collaborate without compromising security, compliance, or control.

The organizations that lead the next wave of healthcare innovation will not be those that centralize the most data, but those that can collaborate most effectively with the data they already have.