Confidential computing is a security technology that protects data while it is being processed – not only when it is stored or transmitted.

It allows organizations to run computations on sensitive or regulated information without exposing the underlying data or code by using hardware-based Trusted Execution Environments (TEEs), also known as secure enclaves.

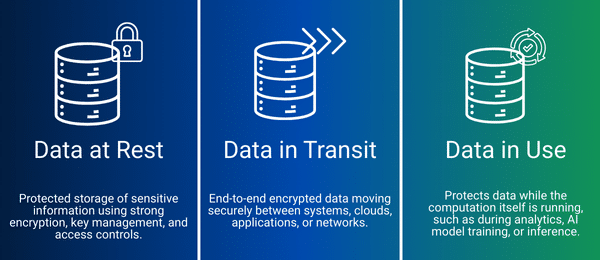

Unlike traditional security models that protect data only at rest and in transit, confidential computing extends protection to data in use. Sensitive information stays isolated from the rest of the system, and encrypted in memory, even while it is being analyzed, processed, or used to train AI models.

In simple terms:

Confidential computing keeps data protected while it is being processed (data in use), enabling secure collaboration, analytics, and AI on highly sensitive datasets without compromising privacy or compliance.

It complements existing protections for data at rest and in transit, ensuring end-to-end security across the entire data lifecycle.

By protecting data in use from infrastructure-level exposure, confidential computing enables regulated organizations to adopt cloud-scale analytics and AI securely and confidently.

Data at Rest

Protected storage of sensitive information using strong encryption, key management, and access controls.

Data in Transit

End-to-end encrypted data moving securely between systems, clouds, applications, or networks.

Data in Use

Data protected while the computation itself is running, such as during analytics, AI model training, or inference.

Modern organizations, especially in regulated sectors like government, defense, healthcare, and finance, process extremely sensitive data that must not be exposed to, shared with, or accessed by unauthorized parties.

However, in traditional computing models, data must be decrypted into system memory before it can be processed, so it sits there in plaintext even if it is encrypted at rest and in transit.

This creates critical risks:

Confidential computing eliminates this exposure by ensuring that data and code remain isolated inside a hardware-protected enclave while computations run.

In practice, this matters because it enables organizations to:

As cyber threats evolve and AI adoption accelerates, confidential computing has become a foundational requirement for secure, privacy-preserving data processing.

Confidential computing relies on a Trusted Execution Environment (TEE), a secure enclave inside a processor where sensitive data can be processed safely.

The computation happens entirely within this protected space, keeping information shielded from the operating system, the cloud provider, and any other unauthorized party.

The process works in five clear steps:

Attestation proves that the TEE is genuine and running the expected code. Local attestation verifies enclaves on the same platform, while remote attestation allows external parties to confirm the integrity of the TEE, which is especially important for cloud deployments.
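The attestation check described above can be sketched in a few lines of Python. This is a simplified illustration, not a real TEE API: the names `HARDWARE_KEY`, `AttestationReport`, `generate_report`, and `verify_report` are hypothetical, and an HMAC with a shared demo key stands in for the hardware signature. Real TEEs sign their reports with an asymmetric key that chains back to the processor vendor.

```python
import hashlib
import hmac
from dataclasses import dataclass

# Assumption: a shared demo key stands in for the hardware root of trust.
# Real TEEs use an asymmetric key pair certified by the CPU vendor.
HARDWARE_KEY = b"demo-hardware-key"


@dataclass(frozen=True)
class AttestationReport:
    measurement: bytes  # hash of the code loaded into the TEE
    nonce: bytes        # freshness value chosen by the verifier
    signature: bytes    # produced by the (simulated) hardware


def measure(code: bytes) -> bytes:
    """Return the SHA-256 digest that identifies a piece of enclave code."""
    return hashlib.sha256(code).digest()


def generate_report(code: bytes, nonce: bytes) -> AttestationReport:
    """Simulate the TEE producing a signed report over its code measurement."""
    measurement = measure(code)
    signature = hmac.new(HARDWARE_KEY, measurement + nonce, hashlib.sha256).digest()
    return AttestationReport(measurement, nonce, signature)


def verify_report(report: AttestationReport, expected_measurement: bytes, nonce: bytes) -> bool:
    """Check that the report is authentic, fresh, and covers the expected code."""
    expected_signature = hmac.new(
        HARDWARE_KEY, report.measurement + report.nonce, hashlib.sha256
    ).digest()
    return (
        hmac.compare_digest(report.signature, expected_signature)
        and hmac.compare_digest(report.nonce, nonce)
        and hmac.compare_digest(report.measurement, expected_measurement)
    )


# Usage: the remote verifier picks a nonce and knows which code it expects.
approved_code = b"def analyze(data): return sum(data)"
nonce = b"verifier-nonce-001"
report = generate_report(approved_code, nonce)
print("report accepted:", verify_report(report, measure(approved_code), nonce))
```

The verifier rejects the report if the signature does not match, if the nonce belongs to an earlier request, or if the measured code differs from what it expects. These are the properties remote attestation is meant to establish before any sensitive data is sent to the TEE.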

Organizations adopt confidential computing to process sensitive, regulated, or proprietary data securely, while unlocking advanced analytics, AI, and collaborative opportunities.

By keeping data protected even while in use, confidential computing reduces risk, supports compliance, and protects intellectual property.

Key reasons organizations implement confidential computing include:

Data remains secure throughout its lifecycle, including during active processing, preventing exposure to unauthorized users, insiders, or cloud operators.

Multiple organizations can work together on analytics or AI tasks without sharing raw data, enabling innovation while maintaining full confidentiality.

Administrators, cloud staff, and malicious actors cannot access or manipulate information inside a TEE, which reduces the risk of insider threats.

Confidential computing supports adherence to strict frameworks such as GDPR, HIPAA, CJIS, and FedRAMP, lowering compliance risk when processing sensitive information.

Organizations can confidently run workloads on public or hybrid cloud infrastructure while maintaining control over encryption keys and data isolation.

Beyond data, confidential computing secures proprietary algorithms, business logic, analytics models, and AI workflows, preserving competitive advantage.

Workloads processed at the edge, closer to IoT devices or local servers, remain protected, enabling secure distributed computing and hybrid cloud strategies.
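To make the collaboration benefit above more concrete, here is a minimal sketch of the pattern in which several organizations contribute data and only an aggregate result leaves the protected environment. It is a plain-Python simulation with no real enclave involved. The class `SimulatedEnclave`, its methods, and the party names are hypothetical and chosen only for illustration.

```python
from statistics import mean


class SimulatedEnclave:
    """Toy stand-in for a TEE: raw inputs go in, only an aggregate comes out."""

    def __init__(self, min_parties: int = 2) -> None:
        self._min_parties = min_parties
        self._inputs: dict[str, list[float]] = {}

    def submit(self, party: str, records: list[float]) -> None:
        # Assumption: in a real deployment the records would arrive encrypted
        # to a key that only the attested enclave holds.
        self._inputs[party] = list(records)

    def joint_mean(self) -> float:
        # Refuse to answer until enough parties have contributed, so the
        # result cannot simply echo a single party's data.
        if len(self._inputs) < self._min_parties:
            raise PermissionError("not enough parties have contributed")
        combined = [value for records in self._inputs.values() for value in records]
        return mean(combined)


# Usage: two hospitals learn a joint average without seeing each other's records.
enclave = SimulatedEnclave()
enclave.submit("hospital_a", [120.0, 130.0])
enclave.submit("hospital_b", [110.0, 140.0])
print("joint mean:", enclave.joint_mean())
```

In a real TEE the isolation is enforced by hardware rather than by a Python class, but the design is the same: each party's raw records stay inside the protected boundary, and only the agreed output is released.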

Several major cloud providers and hardware vendors support confidential computing, offering secure environments for processing sensitive data:

Azure leverages Intel SGX and AMD SEV-SNP hardware to provide confidential computing options for both virtual machines and containerized workloads. Customers can run sensitive applications while keeping data encrypted in use.

Amazon Web Services offers Nitro Enclaves, a virtualization-based TEE architecture that creates isolated environments on EC2 instances. Nitro Enclaves enable secure key handling, cryptographic operations, and attestation.

Google provides confidential VMs built on AMD SEV technology, allowing organizations to protect data in use without major changes to their application code.

Confidential computing provides strong privacy protections, but there are a few trade-offs.

Deployments may require setting up attestation and key management, and some TEEs (process-level enclaves such as Intel SGX, for example) only run code that has been written or adapted for them. Availability can also vary across cloud regions.

Despite these factors, confidential computing remains a valuable solution for secure data collaboration and privacy-focused workloads.
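As a rough illustration of the attestation and key management work mentioned above, the sketch below shows a key service that releases a data key only to workloads whose code measurement is on an allow-list. The class `KeyBroker` and its method are hypothetical, not any vendor's API. The sketch also assumes that the measurement has already been verified against a hardware-signed attestation report, a step that is omitted here.

```python
import hashlib
import hmac
import secrets


class KeyBroker:
    """Hypothetical key service: releases a data key only to approved code."""

    def __init__(self, approved_measurements: set[bytes]) -> None:
        self._approved = set(approved_measurements)
        self._data_key = secrets.token_bytes(32)  # random 256-bit data key

    def release_key(self, measurement: bytes) -> bytes:
        # Assumption: the caller's measurement was already checked against a
        # hardware-signed attestation report before reaching this point.
        for approved in self._approved:
            if hmac.compare_digest(approved, measurement):
                return self._data_key
        raise PermissionError("workload measurement is not on the allow-list")


# Usage: only the approved analytics build can obtain the key.
approved = hashlib.sha256(b"approved-analytics-v1").digest()
unknown = hashlib.sha256(b"modified-analytics").digest()
broker = KeyBroker({approved})

key = broker.release_key(approved)
print("key released, length:", len(key))

try:
    broker.release_key(unknown)
except PermissionError as err:
    print("refused:", err)
```

Tying key release to the code measurement is what lets the data owner keep control of encryption keys even when the workload runs on infrastructure the owner does not operate.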

Confidential computing enables secure processing of sensitive data across industries. Common use cases include:

Healthcare Collaborations

Hospitals and research institutions can analyze patient data or run joint studies without exposing sensitive health information.

Financial Services

Banks, insurers, and financial institutions can share insights or train models on customer data without revealing raw records.

Supply Chain and Manufacturing

Vendors can analyze operational performance or production data while keeping proprietary information confidential.

AI and Model Evaluations

Data owners can evaluate AI models on their own datasets without either side downloading, sharing, or reverse-engineering the other's sensitive data or models.

All these use cases rely on TEEs, ensuring computations occur in a protected enclave so data and models remain secure and confidential.

Duality uses Trusted Execution Environments (TEEs) as part of a platform designed for secure data collaboration and privacy-preserving AI.

TEEs work alongside other Privacy-Enhancing Technologies (PETs), including fully homomorphic encryption (FHE), federated learning (FL), and differential privacy (DP).

With Duality, organizations can work on sensitive or regulated data without exposing it to collaborators or infrastructure providers.

Common applications include:

For example, a pharmaceutical company can run drug trials across multiple hospitals, or a bank can detect fraud patterns across institutions, all without sharing raw data.

Duality is integrated with AWS Nitro Enclaves and Google Cloud Confidential Computing (Confidential VMs), enabling secure execution of analytics and AI workloads inside hardware-based TEEs.

We also handle tasks like attestation, key management, and policy enforcement, allowing users to focus on analysis and insights.

Duality enables organizations to use confidential computing to process sensitive data securely.

Our platform combines Trusted Execution Environments (TEEs) with other Privacy-Enhancing Technologies (PETs) and manages key tasks like attestation, key management, and policy enforcement.

This allows teams to focus on insights, analysis, and collaboration without exposing sensitive information or risking non-compliance.

Get started today by contacting our team or requesting a demo to see how Duality can help your organization work with sensitive data safely and efficiently.