Enterprises want better AI, but the best data is often the hardest to use. It’s spread across organizations, locked behind regulations, or too sensitive to copy into a central training environment.

Federated learning changes the default approach. Instead of moving data to a central location, you send the model to where the data already lives, train locally, and then share only model updates back to an orchestrator for aggregation. Done well, this enables privacy-preserving AI and secure data collaboration across silos – without exposing raw records.

Below are seven practical federated learning applications you’ll see in the real world, plus what makes each one work.

What Is Federated Learning (And How Is It Different From Traditional ML)?

Enterprises often default to centralized training: copy data into one environment, then train models where everything is in one place. That works when data is easy to move.

Federated learning flips that model. The model travels to the data, training happens locally, and only model updates are sent back to an orchestrator for aggregation. The raw records stay inside the source environment.

A typical federated learning system works like this:

- A coordinator (or orchestrator) sends an initial model to each participant (for example, hospitals, banks, agencies, or edge devices).

- Each participant trains the model locally on its own dataset.

- Participants send back model updates (not raw data).

- The coordinator aggregates the updates (often using federated averaging) to improve the global model.

- The updated model is redistributed, and the cycle repeats.
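The cycle above can be sketched in a few lines of Python. This is a minimal simulation under stated assumptions, not a production implementation: the three participants are in-memory lists, the model is a one-feature linear regression, and every name (`local_train`, `federated_average`, `run_rounds`, `make_participants`) is illustrative rather than taken from a federated learning library.

```python
import random

# All names here are hypothetical; the "participants" are simulated in memory.

def local_train(weights, data, lr=0.1, epochs=5):
    """Participant step: gradient descent on y = w*x + b using only local data."""
    w, b = weights
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in data:
            err = (w * x + b) - y
            grad_w += 2 * err * x / len(data)
            grad_b += 2 * err / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]  # only the weights leave the participant, never (x, y)

def federated_average(updates, sizes):
    """Coordinator step: average weights, weighted by local dataset size."""
    total = sum(sizes)
    return [
        sum(u[i] * n for u, n in zip(updates, sizes)) / total
        for i in range(len(updates[0]))
    ]

def run_rounds(participants, rounds=50):
    """Distribute the model, train locally, aggregate, and repeat."""
    global_weights = [0.0, 0.0]
    for _ in range(rounds):
        updates = [local_train(global_weights, data) for data in participants]
        sizes = [len(data) for data in participants]
        global_weights = federated_average(updates, sizes)
    return global_weights

def make_participants(seed=0):
    """Three simulated sites whose data follows y = 3x + 1 plus small noise."""
    rng = random.Random(seed)
    participants = []
    for n in (20, 50, 30):
        data = []
        for _ in range(n):
            x = rng.uniform(-1, 1)
            data.append((x, 3.0 * x + 1.0 + rng.gauss(0, 0.01)))
        participants.append(data)
    return participants

if __name__ == "__main__":
    print(run_rounds(make_participants()))  # should land close to [3.0, 1.0]
```

In this sketch the coordinator only ever sees weight vectors. Weighting by dataset size follows the usual federated averaging formulation; a real deployment would also handle authentication, transport, and update validation.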

This approach supports privacy-preserving AI and secure data collaboration, especially in regulated sectors where sensitive data cannot be centralized.

In practice, federated learning is often paired with additional privacy and security controls, such as secure aggregation and differential privacy.

These layers help reduce the risk of leaking sensitive information through model updates.
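As a rough illustration of the differential privacy side, a participant can clip its update to a maximum norm and add Gaussian noise before sending it. The sketch below assumes a plain list of floats as the update; the function names and the `max_norm` and `noise_multiplier` parameters are hypothetical, and the values shown are not calibrated to any formal privacy budget.

```python
import math
import random

# Hypothetical helper names; parameters are illustrative, not calibrated.

def clip_update(update, max_norm):
    """Scale the update down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(v * v for v in update))
    if norm <= max_norm or norm == 0.0:
        return list(update)
    scale = max_norm / norm
    return [v * scale for v in update]

def add_gaussian_noise(update, noise_std, rng):
    """Add independent Gaussian noise to every coordinate."""
    return [v + rng.gauss(0.0, noise_std) for v in update]

def privatize(update, max_norm, noise_multiplier, rng):
    """Clip first, then add noise proportional to the clipping bound."""
    clipped = clip_update(update, max_norm)
    return add_gaussian_noise(clipped, noise_multiplier * max_norm, rng)

if __name__ == "__main__":
    rng = random.Random(0)
    print(privatize([3.0, 4.0], max_norm=1.0, noise_multiplier=0.5, rng=rng))
```

Clipping bounds how much any single participant can influence the aggregate, and the noise masks what remains. Turning this into an actual privacy guarantee requires accounting across training rounds, which this sketch does not attempt.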

When Should You Use Federated Learning – And When Shouldn’t You?

Federated learning is a strong fit when the main blocker to better AI is data movement – legal, operational, or security constraints that make centralization impractical.

Use federated learning when:

- Data cannot move due to regulations, contracts, sovereignty rules, or security policy.

- Multiple parties need a shared model, but none can share raw data with the others.

- Edge or on-prem environments matter (latency, bandwidth, or data volume make constant uploads unrealistic).

- You need a governance-friendly way to collaborate while keeping ownership and control local.

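- The participating datasets are broadly compatible (shared schemas and feature definitions, comparable data quality), so a single shared model can converge meaningfully without excessive customization. Some variation across populations is expected; incompatible feature spaces are the real obstacle.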

Federated learning is usually not the best first option when:

- You can legally and safely centralize data, and the only goal is faster experimentation.

- The organization cannot support orchestration requirements (participant onboarding, monitoring, update validation).

- The use case needs tightly controlled, centralized labeling or fully consistent data pipelines that are hard to reproduce across participants.

- The goal is to join or harmonize heterogeneous datasets (different schemas, feature spaces, or populations). Federated learning trains across silos; it does not reconcile incompatible data.

A practical rule: if centralized training is feasible, it’s often simpler. If centralization is blocked, federated learning becomes one of the most credible paths to production.

Most enterprise deployments are cross-silo federated learning (a small number of organizations), while consumer scenarios are usually cross-device (millions of endpoints).

Federated learning tends to show up anywhere teams need better models but can’t centralize sensitive data. The seven real-world applications below are the most common places enterprises start.

1) Healthcare: Training on Patient Data Without Sharing Patient Data

Federated learning applications in healthcare are often the first examples people cite, and for good reason: healthcare data is both highly valuable for AI and highly restricted by policy, ethics, and regulation.

What federated learning enables:

- Hospitals can collaboratively train models across sites while keeping protected health information local.

- Models improve through diversity: different scanners, populations, and care pathways.

Common healthcare use cases:

- Medical imaging (e.g., detection or triage support across multiple hospitals)

- Predicting clinical deterioration or resource needs

- Rare disease pattern learning across institutions that can’t pool data

What to watch for:

- Variation in data quality and coding standards across hospitals (real-world heterogeneity)

- The need for strong governance on who can participate and how updates are validated

2) Financial Services: Fraud Detection Across Institutions (Without Pooling Transactions)

In finance, the core challenge is that fraud patterns cross organizations, but transaction data cannot be freely shared.

What federated learning enables:

- Multiple institutions can contribute to a shared fraud model while keeping customer transaction data in-house.

- Faster adaptation to evolving attack patterns (card testing, synthetic IDs, mule accounts).

Practical examples:

- Fraud and anomaly detection across banks or payment providers

- Anti-money laundering signal enrichment (with careful legal and compliance design)

- Risk models that benefit from broader patterns without disclosing individual records

What to watch for:

- Strong defenses against model poisoning (malicious or compromised participants sending bad updates)

- Careful auditability for regulated model risk management
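One common family of poisoning defenses replaces the plain average with a robust aggregator and screens out updates that look implausible. The sketch below shows two simple options, a coordinate-wise median and a norm-based filter; the function names and the `max_norm` threshold are assumptions for illustration, and neither technique stops every attack on its own.

```python
import statistics

# Hypothetical helper names; thresholds are illustrative.

def mean_aggregate(updates):
    """Plain average: a single extreme update can dominate the result."""
    return [sum(col) / len(col) for col in zip(*updates)]

def median_aggregate(updates):
    """Coordinate-wise median: tolerates a minority of extreme updates."""
    return [statistics.median(col) for col in zip(*updates)]

def screen_by_norm(updates, max_norm):
    """Drop updates whose L2 norm exceeds a bound agreed in advance."""
    kept = []
    for update in updates:
        norm = sum(v * v for v in update) ** 0.5
        if norm <= max_norm:
            kept.append(update)
    return kept

if __name__ == "__main__":
    honest = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1]]
    poisoned = [100.0, -100.0]
    everyone = honest + [poisoned]
    print(mean_aggregate(everyone))    # pulled far away from the honest values
    print(median_aggregate(everyone))  # stays near the honest values
    print(screen_by_norm(everyone, max_norm=10.0))  # poisoned update removed
```

For audit purposes, it helps to record which updates were rejected and why, so the screening decision itself can be reviewed later.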

3) Government and Defense: Collaboration Across Agencies with Data Sovereignty Intact

Government and defense teams often face the sharpest version of the problem: data is sensitive, classified, or restricted – but mission outcomes depend on cross-unit collaboration.

What federated learning enables:

- Cross-agency model training where each party keeps its datasets inside its own boundary.

- A scalable way to build shared capabilities while respecting security policies.

Common applications:

- Threat intelligence models trained across separate environments

- Cybersecurity detection models improved across multiple networks

- Investigations and analysis where data cannot be centralized (think “work with it where it sits”)

What to watch for:

- Deployment architecture, attestation, and strict control over model distribution

- Clear approval paths and security controls for every participating node

4) Insurance: Fraud, Underwriting, And Claims Without Exposing Sensitive Data

Insurance is a natural fit for federated learning because the most valuable signals sit inside highly sensitive datasets: policyholder financial records, claims histories, and in many cases health-related information.

At the same time, insurers operate across business lines, regions, and partners (brokers, reinsurers, third-party administrators), which makes centralizing data difficult from both a compliance and governance standpoint.

What federated learning enables:

- Better fraud detection without centralizing claims data. Multiple entities (business units, subsidiaries, or partners) can contribute to a shared fraud model while keeping raw claims and customer records local.

- More accurate underwriting and pricing models across regions. Local datasets often capture different risk patterns, regulations, and behaviors. Federated learning can improve model generalization by learning from that diversity without requiring data pooling.

- Cross-border operations with stronger data control. When data residency and transfer rules vary by country, federated approaches can help keep sensitive data inside the required boundary while still contributing to a global model.

Common insurance use cases:

- Claims fraud detection: Identifying suspicious claim patterns that are hard to spot from a single book of business.

- Underwriting and dynamic pricing: Improving risk assessment while minimizing exposure of sensitive applicant and policy data.

- Claims triage and automation: Prioritizing claims, routing to adjusters, and flagging missing documentation based on patterns learned locally.

- Regulatory and reinsurance reporting support: Improving consistency of risk insights without centralizing the underlying customer-level data.

What to watch for:

- Model governance and auditability. Insurance models often fall under strict model risk and regulatory scrutiny. You need clear lineage for who participated, what was trained, and how updates were validated.

- Privacy risk from updates. Even when raw data stays local, model updates can leak information without additional protections. Secure aggregation and differential privacy can reduce that risk, but they must be designed into the system.

- Data drift and heterogeneity. Claims and underwriting data can vary widely across product lines and geographies, which can affect convergence and stability if not handled carefully.

- Partner trust boundaries. If training spans multiple organizations (for example, insurer + reinsurer), you need explicit rules for participation, update validation, and what each party receives.
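To make the lineage requirement concrete, here is a minimal sketch of a tamper-evident training log, assuming the coordinator writes one record per round. The record fields and the function names (`append_round_record`, `verify_log`) are hypothetical; a real system would also add timestamps, signatures, and durable storage.

```python
import hashlib
import json

# Hypothetical record layout: one entry per training round, hash-chained.

def append_round_record(log, round_id, participants, model_bytes, validation):
    """Append a record that commits to the previous record's hash."""
    prev_hash = log[-1]["record_hash"] if log else "0" * 64
    record = {
        "round": round_id,
        "participants": sorted(participants),           # who participated
        "model_sha256": hashlib.sha256(model_bytes).hexdigest(),  # what was trained
        "validation": validation,                       # how updates were checked
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["record_hash"] = hashlib.sha256(payload).hexdigest()
    log.append(record)
    return record

def verify_log(log):
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev_hash = "0" * 64
    for record in log:
        body = {k: v for k, v in record.items() if k != "record_hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if record["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != record["record_hash"]:
            return False
        prev_hash = record["record_hash"]
    return True

if __name__ == "__main__":
    log = []
    append_round_record(log, 1, ["insurer_a", "reinsurer_b"], b"weights-v1",
                        {"norm_check": "passed"})
    append_round_record(log, 2, ["insurer_a"], b"weights-v2",
                        {"norm_check": "passed"})
    print(verify_log(log))
```

Chaining each record to the previous one means a reviewer can detect after-the-fact edits without trusting the party that stores the log.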

5) Manufacturing: Predictive Maintenance And Supply Chain Insights Across Sites

Manufacturing often operates in distributed environments: multiple plants, lines, suppliers, and service vendors – each with its own operational telemetry, quality metrics, and proprietary process knowledge.

Centralizing this data can introduce IP exposure risk, create new attack surfaces, and slow down collaboration, especially when partners have different security standards or work across borders.

Federated learning is one of the most practical ways to train models across these environments while keeping sensitive operational data local.

What federated learning enables:

- Predictive maintenance across plants without sharing raw machine data. Instead of exporting sensor logs and maintenance histories to one place, each site can train locally and contribute updates to a shared model that improves failure prediction.

- Quality and defect detection across production lines. Plants can learn collectively from different defect patterns, camera setups, and materials while keeping production and inspection data inside each facility.

- Cross-organization collaboration without exposing IP. Vendors and suppliers can participate in model improvement without receiving raw process metrics, recipes, or proprietary production parameters.

Common manufacturing use cases:

- Predictive maintenance: Reducing unplanned downtime by learning failure signatures across equipment fleets.

- Quality control: Improving anomaly detection or defect classification from imaging or sensor systems across multiple lines or sites.

- Process optimization: Learning from distributed operational data to reduce scrap, improve yield, or stabilize throughput.

- Supply chain risk and forecasting: Training models across distributed partners where sharing detailed inventory or demand data is sensitive.

What to watch for:

- Federated learning and edge computing constraints. Many manufacturing setups rely on edge systems where bandwidth is limited and data volumes are large. Communication-efficient training (fewer rounds, compression) matters to keep systems practical.

- Operational reliability. Plants may have inconsistent connectivity or maintenance windows. A federated learning system must tolerate participants going offline without breaking the training process.

- Non-identical environments. Different machines, sensor types, and production conditions create non-uniform data. This is normal in real-world federated learning, but it requires careful evaluation and monitoring.

- Security posture across partners. If suppliers or vendors participate, the overall system is only as strong as the weakest participant. Validation, access control, and update screening become critical.
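Two of the points above, limited bandwidth and sites dropping offline, can be illustrated together. The sketch below assumes each plant sends only its largest-magnitude update values (top-k sparsification) and that the coordinator aggregates whatever arrived, skipping the round if too few sites reported. The function names and the `min_participants` parameter are hypothetical.

```python
# Hypothetical helper names; k and min_participants are illustrative settings.

def top_k_sparsify(update, k):
    """Keep only the k largest-magnitude entries as {index: value}."""
    ranked = sorted(range(len(update)), key=lambda i: abs(update[i]), reverse=True)
    return {i: update[i] for i in sorted(ranked[:k])}

def densify(sparse, length):
    """Rebuild a full-length vector, filling dropped entries with zero."""
    dense = [0.0] * length
    for i, value in sparse.items():
        dense[i] = value
    return dense

def aggregate_available(received, length, min_participants):
    """Average the sparse updates that arrived; None means skip this round."""
    if len(received) < min_participants:
        return None  # too few sites reported; wait instead of failing the job
    total = [0.0] * length
    for sparse in received.values():
        for i, value in sparse.items():
            total[i] += value
    return [value / len(received) for value in total]

if __name__ == "__main__":
    plant_a = top_k_sparsify([0.1, -5.0, 0.2, 3.0], k=2)
    plant_b = top_k_sparsify([1.0, 1.0, 0.0, 0.0], k=2)
    # plant_c is offline this round and simply does not appear.
    print(aggregate_available({"plant_a": plant_a, "plant_b": plant_b}, 4, 2))
```

Sparsification trades some accuracy per round for a smaller payload, so it is worth measuring the effect on convergence before relying on it.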

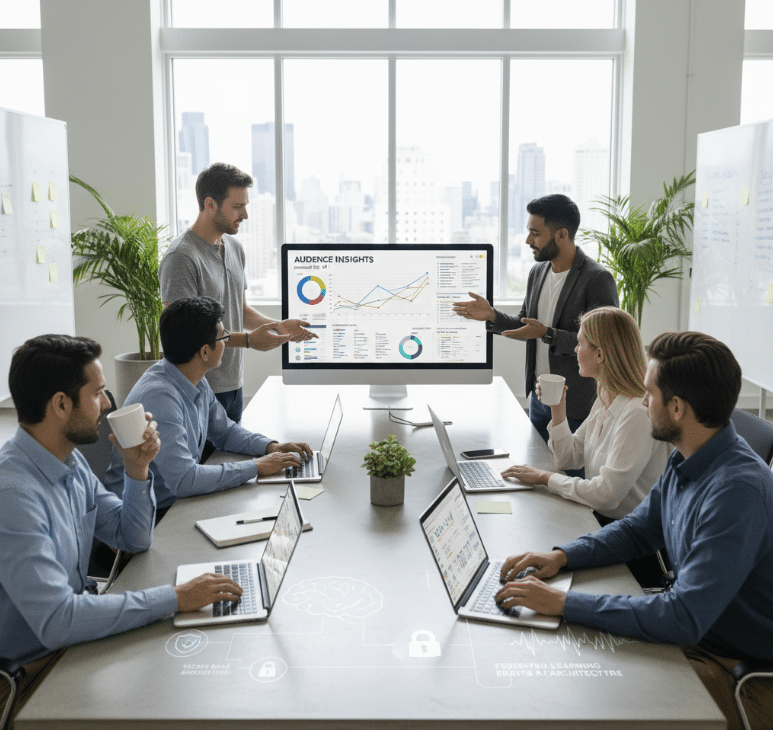

6) Marketing: Privacy-Preserving Audience Insights And Measurement Across Partners

Marketing depends on data-driven insights: audience segmentation, conversion measurement, churn modeling, and personalization.

But marketing data is increasingly governed by privacy regulations and data handling restrictions, and it often sits across multiple parties (brands, agencies, retailers, publishers, and ad-tech providers). That combination makes centralizing data both risky and slow.

Federated learning is relevant here when the goal is to build better models across distributed customer signals while keeping sensitive consumer data inside each organization’s boundary.

What federated learning enables:

- Collaborative modeling without sharing raw customer-level data. Partners can train shared models for segmentation or propensity while keeping identifiers and sensitive attributes local.

- Better measurement where data can’t be pooled. When organizations can’t legally or operationally combine datasets, federated learning can still support model improvement by aggregating updates rather than raw events.

- More compliant personalization approaches. Models can learn from distributed behavior patterns without requiring broad data replication into a central environment.

Common marketing use cases:

- Propensity and retention modeling: Learning patterns of churn or repeat purchase across distributed datasets.

- Targeted offers and segmentation: Building audience models that improve personalization while respecting privacy boundaries.

- Cross-partner analytics: Improving performance modeling when data is split across a brand, retailer, and agency stack.

- Conversion and funnel optimization: Enhancing predictive models where the full user journey is fragmented across systems.

What to watch for:

- Don’t over-claim privacy. Federated learning helps because raw data stays local, but marketing data is particularly sensitive. Use additional privacy protections and strict governance to avoid leakage through updates.

- Incentives and trust boundaries. Marketing ecosystems often involve multiple organizations with competing interests. Participation rules, model ownership, and outcome sharing must be defined upfront.

- Data consistency and definitions. Different teams may track “conversion,” “active user,” or “retention” differently. Federated learning doesn’t automatically fix inconsistent definitions, so align metrics and labeling where possible.

- Cross-device examples are not the same as enterprise collaboration. Some of the best-known federated learning examples come from consumer devices (on-device personalization). Enterprise marketing collaborations are usually cross-silo and require stronger governance and auditing.

7) Data Service Providers: Secure Data Collaboration Without Giving Away Raw Data Or Model IP

Data service providers sit at the center of modern AI: they aggregate, prepare, analyze, or commercialize data and analytics for customers.

But monetizing data and models is hard when customers can’t send sensitive datasets – and providers can’t expose proprietary pipelines or model IP.

This is a strong real-world application of federated learning because it addresses a practical constraint: both sides want value, but neither side can safely “hand over” the raw assets.

What federated learning enables:

- Training and tuning with protected data boundaries. Customers can keep sensitive datasets in their environment while still participating in training or fine-tuning workflows through model updates.

- Collaboration without raw access. Data providers can deliver insight-driven models without taking custody of customer records or exposing internal data assets.

- Partnership-led modeling at scale. Providers can support multiple customers or partners across sectors while keeping each dataset governed locally.

Common data service provider use cases:

- Data-driven model improvement: Providers improve models using distributed customer data signals without centralizing raw data.

- Customer-specific personalization: Adapting a provider’s model to each customer’s environment while maintaining protections for both data and model IP.

- Analytics-as-a-service with stronger controls: Supporting analysis, joins, or feature generation workflows where customers require strict guarantees that data is not exposed.

- Public sector and regulated customer engagements: Supporting collaborations where procurement, confidentiality, or policy constraints block traditional data sharing.

What to watch for:

- Clear ownership and governance. Who owns the global model? Who can retrain it? What happens if a customer leaves? These questions must be answered early to avoid operational and legal friction.

- Security against poisoning and manipulation. Providers that run multi-tenant collaboration workflows must defend against malicious updates and enforce validation rules.

- Auditability and compliance evidence. Customers in regulated sectors will need documentation of controls, training processes, and protections – not just architecture diagrams.

- Related “fleet” patterns. Similar dynamics show up in fleet environments (including automotive), where learning benefits from broad real-world experience but raw telemetry is sensitive and high-volume. Federated learning can support shared improvement without centralizing every raw event.

What Does It Take To Run Federated Learning At Scale (System Design Essentials)?

In real deployments, federated learning is not just an algorithm. It’s a distributed system that has to be secure, reliable, and auditable.

Key requirements for federated learning at scale include:

- Secure aggregation so the coordinator can combine updates without inspecting individual contributions.

- Privacy protection (often differential privacy) to reduce the risk of information leakage from updates.

- Robustness against poisoning, sybil attacks, and unreliable participants.

- Communication efficiency (compression, fewer rounds, partial participation) to reduce bandwidth and training time.

- Compliance and auditability so you can answer who trained what, when, and under which controls.
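The secure aggregation requirement can be illustrated with pairwise masking: each pair of participants shares a random mask that one adds and the other subtracts, so the masks cancel in the sum while each individual submission looks random. The sketch below is a toy under stated assumptions. A single seeded generator stands in for the pairwise key agreement a real protocol would use, dropouts are not handled, and the names and the `MODULUS` and `SCALE` constants are illustrative.

```python
import random

MODULUS = 2 ** 32   # all arithmetic is done modulo this value
SCALE = 10 ** 6     # fixed-point scale for turning floats into integers

def encode(update):
    """Quantize floats to integers modulo MODULUS."""
    return [round(v * SCALE) % MODULUS for v in update]

def make_pairwise_masks(n_participants, length, seed=0):
    """Simulate pairwise shared masks; participant i adds, participant j subtracts."""
    rng = random.Random(seed)  # stand-in for real pairwise key agreement
    masks = [[0] * length for _ in range(n_participants)]
    for i in range(n_participants):
        for j in range(i + 1, n_participants):
            shared = [rng.randrange(MODULUS) for _ in range(length)]
            for k in range(length):
                masks[i][k] = (masks[i][k] + shared[k]) % MODULUS
                masks[j][k] = (masks[j][k] - shared[k]) % MODULUS
    return masks

def mask_update(update, mask):
    """What a participant actually sends to the coordinator."""
    return [(e + m) % MODULUS for e, m in zip(encode(update), mask)]

def unmask_sum(masked_updates):
    """Coordinator: sum the masked vectors; the pairwise masks cancel out."""
    result = []
    for column in zip(*masked_updates):
        total = sum(column) % MODULUS
        if total >= MODULUS // 2:   # map back to a signed value
            total -= MODULUS
        result.append(total / SCALE)
    return result

if __name__ == "__main__":
    updates = [[0.5, -1.0], [0.25, 2.0], [-0.75, 1.0]]
    masks = make_pairwise_masks(len(updates), 2)
    masked = [mask_update(u, m) for u, m in zip(updates, masks)]
    print(unmask_sum(masked))  # the sum of the updates, without seeing any one of them
```

The coordinator learns only the aggregate. Production protocols add key exchange, secret sharing to recover from dropouts, and protections against a coordinator that colludes with participants.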

In other words: federated learning is the “what,” but the system design is the “how” that makes it workable for regulated, high-stakes environments.

How Duality Helps Teams Deploy Federated Learning In Regulated Environments

Federated learning is most valuable when sensitive data cannot be centralized. But real-world deployments demand more than algorithms – they require enterprise-grade security, governance, auditability, and operational reliability.

Duality enables secure data collaboration so teams can train, validate, and operate federated models across organizational boundaries while keeping data controlled at the source. Beyond the core federated workflow, the platform addresses the hard parts that usually stall production deployments:

Easier Deployment

Implementing federated learning across multiple organizations is operationally complex. Duality streamlines participant onboarding, orchestration, and execution with a purpose-built platform that reduces friction and shortens time to production.

Security

Duality supports multiple Privacy-Enhancing Technologies (PETs), allowing teams to combine federated learning with complementary techniques such as Trusted Execution Environments (TEEs) and Differential Privacy to meet strict security and regulatory requirements.

Scale and Performance

Built on NVIDIA FLARE, the platform delivers robust scalability and high performance, supporting enterprise-grade federated workloads without sacrificing reliability or control.

Governance

Duality adds a sophisticated governance layer that gives data owners full visibility and control over who participates, what runs, how results are shared, and how compliance is enforced, backed by auditability suitable for regulated environments.

If you’re evaluating federated learning for healthcare, financial services, insurance, government, manufacturing, marketing, or data partnerships, Duality can help you define the right collaboration architecture, controls, and operating model for production.

Book a demo to see how secure collaboration can work in your environment.