Fully Homomorphic Encryption (FHE) is an encryption method that lets you perform computations directly on encrypted data without decrypting it first.

The output of the computation is also encrypted, and when the data owner decrypts it, they get the same result they would have gotten if the computation had been performed on the original plaintext data.

In practical terms, FHE solves a core security and privacy problem: today, most systems encrypt data at rest (in storage) and in transit (over networks), but they still need to decrypt data to use it for analytics, AI, or processing.

FHE keeps the data encrypted while it is being processed, which is why it is often described as encryption for data in use.

This capability is valuable when sensitive information must be processed in environments that should not have access to plaintext, such as cloud platforms, third-party analytics services, or multi-organization collaborations.

With FHE, an untrusted compute environment can run approved computations and return encrypted results, without learning the underlying data.

In short: FHE lets an untrusted system compute on encrypted inputs and return an encrypted result that only the key holder can decrypt.

Here’s a simple explanation you can reuse internally:

FHE is encryption that still lets you compute. You encrypt your data, send the encrypted version to someone else (like a cloud service), and they can run calculations on it without decrypting it.

The result they send back is still encrypted. Only you can decrypt the answer. So you get the benefits of cloud compute or collaboration without handing over readable data.

This framing is consistent with standard definitions: compute happens on ciphertexts, outputs remain encrypted, and decrypting yields the same result as plaintext computation.

FHE matters because the computer (or cloud service) can do useful work without ever seeing the underlying inputs.

A helpful mental model is: the data owner encrypts input x, sends Enc(x) to an untrusted environment, that environment computes Enc(f(x)), and only the data owner can decrypt to get f(x).
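The Enc(x) to Enc(f(x)) flow above can be sketched with a toy version of the Paillier cryptosystem. Paillier is only additively homomorphic, so this is not FHE, and the key size here is far too small for real use; it is a minimal illustration in which the untrusted side computes a weighted sum without ever holding the secret key. The input values, the weights, and the function names are assumptions made for the example.

```python
import math
import secrets

# Demo-sized Mersenne primes; a real deployment needs far larger keys.
P, Q = 2**31 - 1, 2**61 - 1
N = P * Q                                            # public key
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)    # secret key: lcm(P-1, Q-1)
MU = pow(LAM, -1, N)                                 # secret: inverse of LAM mod N

def encrypt(m: int) -> int:
    """Data owner: Enc(m) = (1 + m*N) * r^N mod N^2 with fresh random r."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (1 + m * N) * pow(r, N, N2) % N2

def decrypt(c: int) -> int:
    """Data owner: requires the secret values LAM and MU."""
    return (pow(c, LAM, N2) - 1) // N * MU % N

def server_weighted_sum(ciphertexts, weights):
    """Untrusted side: touches only ciphertexts and the public modulus."""
    acc = 1  # 1 is a trivial encryption of 0
    for c, w in zip(ciphertexts, weights):
        # Enc(a)^w * Enc(b) mod N^2 is an encryption of w*a + b.
        acc = acc * pow(c, w, N2) % N2
    return acc

x = [52, 17, 8]                                     # sensitive inputs (made up)
weights = [3, 2, 5]                                 # public weights (made up)
enc_x = [encrypt(v) for v in x]                     # owner sends Enc(x)
enc_result = server_weighted_sum(enc_x, weights)    # server returns Enc(f(x))
result = decrypt(enc_result)                        # owner recovers f(x) = 230
```

The server function never references `LAM` or `MU`, which is the trust boundary the mental model describes. A fully homomorphic scheme extends the same pattern to functions that mix additions and multiplications.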

This is a fundamentally different trust assumption than “encrypt it, send it, decrypt it in the cloud, and hope the cloud is secure.”

Cryptography researchers often describe this idea as “computing on encrypted data,” and it’s been a long-running goal because it reduces how often we need to rely on operational controls (access management, segmentation, monitoring) to prevent plaintext exposure.

Traditional encryption is excellent at preventing data exposure when data is stored or transmitted. But it does not solve this requirement:

“I want you to compute on my data, but I do not want you to learn my data.”

FHE is one of several privacy-enhancing technologies (PETs) designed to reduce data exposure during collaboration and analytics, especially when policy controls alone are not enough.

In real systems, this shows up as common business and government constraints:

Fully homomorphic encryption is designed specifically to reduce or eliminate the need to decrypt for processing, which is a major reason it is often framed as “encryption for data in use.”

“Homomorphic encryption” is the umbrella term. FHE is the strongest form.

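Several categories are commonly referenced: partially homomorphic encryption (PHE), which supports a single type of operation, such as only addition or only multiplication; somewhat homomorphic encryption (SHE), which supports both but only for a limited number of operations; leveled FHE, which supports computations up to a depth fixed in advance; and FHE, which supports arbitrary computation.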

This distinction matters because many “homomorphic encryption” explanations online stop at the big idea and don’t clarify what you can actually run, at what complexity, and with what operational constraints.

Unlike conventional encryption (where ciphertext is simply unreadable and not designed for manipulation), FHE ciphertexts are structured so certain algebraic operations on the ciphertext correspond to meaningful operations on the underlying plaintext.
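To make "operations on the ciphertext correspond to operations on the plaintext" concrete, here is a toy symmetric scheme over the integers, loosely modeled on the van Dijk, Gentry, Halevi, and Vaikuntanathan construction. It is a sketch under assumed toy parameters and is not secure at this size. Each ciphertext hides one bit: adding two ciphertexts yields the XOR of the bits, and multiplying them yields the AND.

```python
import secrets

SECRET_KEY = 2**61 - 1   # an odd integer; toy size chosen for readability

def encrypt(bit: int) -> int:
    """c = key * q + 2 * r + bit, with random q and small random noise r."""
    q = secrets.randbits(64) + 1
    r = secrets.randbelow(8)
    return SECRET_KEY * q + 2 * r + bit

def decrypt(c: int) -> int:
    """Strip the multiple of the key, then read the parity of what is left."""
    return (c % SECRET_KEY) % 2

for a in (0, 1):
    for b in (0, 1):
        ca, cb = encrypt(a), encrypt(b)
        assert decrypt(ca + cb) == a ^ b   # ciphertext addition acts as XOR
        assert decrypt(ca * cb) == a & b   # ciphertext multiplication acts as AND
```

XOR and AND together are enough to express any boolean circuit, which is why supporting both addition and multiplication is the dividing line between partially and fully homomorphic schemes.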

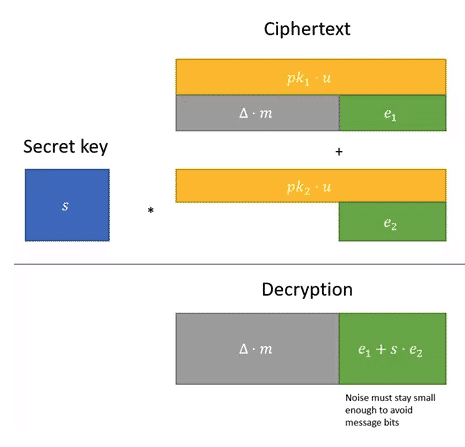

FHE schemes are probabilistic. Every time you encrypt data, a small amount of mathematical “noise” is intentionally added. As a result, encrypting the same plaintext twice produces different ciphertexts. This property, known as probabilistic encryption, prevents attackers from recognizing patterns in encrypted data and strengthens overall security.
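A minimal sketch of probabilistic encryption, using a toy symmetric scheme in the style of Learning With Errors (LWE). The dimension, modulus, and noise range are assumptions picked for readability, not security. Each call draws a fresh random vector and a fresh noise term, so two encryptions of the same bit differ while both decrypt correctly.

```python
import secrets

DIM = 16        # toy dimension
MOD = 2**15     # toy ciphertext modulus
SECRET = [secrets.randbelow(MOD) for _ in range(DIM)]

def dot(a, s):
    return sum(x * y for x, y in zip(a, s))

def encrypt(bit: int):
    """Return (a, b) with b = <a, s> + noise + bit * MOD/2 (mod MOD)."""
    a = [secrets.randbelow(MOD) for _ in range(DIM)]   # fresh randomness
    noise = secrets.randbelow(9) - 4                   # small noise in [-4, 4]
    b = (dot(a, SECRET) + noise + bit * (MOD // 2)) % MOD
    return a, b

def decrypt(ciphertext) -> int:
    """Remove <a, s>; the remainder sits near 0 for bit 0, near MOD/2 for bit 1."""
    a, b = ciphertext
    d = (b - dot(a, SECRET)) % MOD
    return 1 if MOD // 4 < d < 3 * MOD // 4 else 0

c1 = encrypt(1)
c2 = encrypt(1)
same_plaintext = decrypt(c1) == decrypt(c2) == 1   # both decrypt to 1
different_ciphertexts = c1 != c2                   # but the ciphertexts differ
```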

The evaluator takes encrypted inputs and performs operations on them (often modeled as arithmetic or boolean circuits).

Every fresh ciphertext already contains some noise, and each homomorphic operation adds more, with multiplications typically growing it much faster than additions. This noise is fundamental to the security assumptions behind most lattice-based FHE schemes, but it must be carefully controlled during computation.

The output of the computation remains encrypted. Only the party holding the secret key can decrypt it and recover the result, which will match the output that would have been produced by running the same function on the original plaintext inputs.

As computations continue, accumulated noise increases. If it grows beyond a certain threshold, decryption will fail.

To support deeper computations, practical FHE schemes use techniques such as bootstrapping, which “refresh” a ciphertext by reducing its noise so additional computation can proceed safely.

In practice, this means FHE systems must balance functionality, performance, and noise management to ensure correct results without exposing plaintext.
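The noise growth and the decryption threshold described above can be observed in a toy integer scheme. This is a sketch, not a real FHE implementation: the key is tiny, and `q` and `r` are passed in explicitly (real encryption samples them at random) so that the numbers are reproducible. Addition adds the noise terms, multiplication multiplies them, and once the noise exceeds half the key the decrypted bit is wrong.

```python
SECRET_KEY = 1_000_003   # odd toy key; the noise budget is about SECRET_KEY / 2

def encrypt(bit: int, q: int, r: int) -> int:
    """c = key * q + (2 * r + bit); the bracketed part is the noise."""
    return SECRET_KEY * q + 2 * r + bit

def noise(c: int) -> int:
    """Centered remainder modulo the key (only the key holder can compute it)."""
    rem = c % SECRET_KEY
    return rem - SECRET_KEY if rem > SECRET_KEY // 2 else rem

def decrypt(c: int) -> int:
    return noise(c) % 2

# Small noise: everything stays inside the budget.
a = encrypt(1, q=12_345, r=3)    # noise 7
b = encrypt(1, q=67_890, r=2)    # noise 5
assert noise(a + b) == 12        # addition adds noise
assert noise(a * b) == 35        # multiplication multiplies noise
assert decrypt(a * b) == 1       # 1 AND 1 = 1, correct

# Large noise: one multiplication pushes it past the threshold.
c = encrypt(1, q=11_111, r=400)  # noise 801
d = encrypt(1, q=22_222, r=400)  # noise 801
overflowed = c * d               # true noise 801 * 801 = 641601 > key / 2
assert decrypt(overflowed) == 0  # wrong: 1 AND 1 should decrypt to 1
```

Bootstrapping exists to prevent the second case: it replaces a ciphertext whose noise is approaching the threshold with a low-noise ciphertext of the same plaintext.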

Fully Homomorphic Encryption was a long-standing open problem in cryptography: while partially homomorphic systems existed (supporting only addition or only multiplication), constructing a scheme that could evaluate arbitrary computations on encrypted data remained out of reach for decades.

In 2009, cryptographer Craig Gentry (then at Stanford University) published the first viable construction of fully homomorphic encryption in his PhD work.

A key idea in Gentry’s construction is bootstrapping, a technique that can “refresh” a ciphertext by reducing accumulated noise, so additional computation remains possible even as operations compound.

Many widely used FHE schemes today are based on lattice-based cryptography, including constructions related to Learning With Errors (LWE) and Ring Learning With Errors (RLWE).

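Based on current knowledge, these assumptions are believed to resist attacks by quantum computers, which is why lattice-based schemes are strong candidates for post-quantum cryptography and why FHE is often discussed alongside post-quantum security.

Several practical FHE scheme families are commonly referenced, including BGV and BFV (exact integer arithmetic), CKKS (approximate real-number arithmetic), and FHEW/TFHE-style schemes (boolean gates with fast bootstrapping).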

These schemes differ in how they represent encrypted values, manage noise growth, and support different types of arithmetic (exact integers vs approximate real-number computation). The high-level principle remains the same: computation can be performed on ciphertexts, while plaintext stays hidden.
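The difference between exact and approximate arithmetic can be illustrated without any encryption at all, by modeling only how values are encoded. The sketch below is a plaintext model under assumed parameters (`T` and `SCALE` are illustrative): the exact style works on integers modulo a plaintext modulus, while the approximate style stores real numbers as scaled integers and rescales after each multiplication, accepting a small rounding error.

```python
T = 65_537        # plaintext modulus for the exact model (assumption)
SCALE = 2**20     # fixed-point scale for the approximate model (assumption)

def exact_mul_add(a: int, b: int, c: int) -> int:
    """Exact style: integer arithmetic that wraps around modulo T."""
    return (a * b + c) % T

def encode(x: float) -> int:
    """Approximate style: store a real number as a scaled, rounded integer."""
    return round(x * SCALE)

def decode(v: int) -> float:
    return v / SCALE

def approx_mul(u: int, v: int) -> int:
    """The raw product carries scale SCALE**2; rescale back with rounding."""
    return (u * v + SCALE // 2) // SCALE

exact = exact_mul_add(300, 300, 7)    # 90007 mod 65537 = 24470, no error
approx = decode(approx_mul(encode(3.14159), encode(2.71828)))
error = abs(approx - 3.14159 * 2.71828)   # small but nonzero in general
```

The exact model suits counting, matching, and integer statistics; the approximate model suits workloads such as machine learning, where small numerical error is already tolerated.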

FHE is harder to adopt than conventional encryption because it changes the compute model, not just the encryption layer.

Even if you ignore cryptographic details, teams run into several practical realities:

A useful way to frame it: FHE is not “turn on encryption in a database.”

It’s closer to adding a specialized secure-compute layer into a workflow and deciding which computations truly need that level of protection.

FHE is a powerful tool, but it is not the right answer for every privacy or security requirement.

The fastest way to evaluate fit is to be precise about four things: the computation, the data, the trust boundary, and the acceptable performance.

Start by naming the specific operation you need to run on sensitive data. FHE is usually a better fit for well-defined, repeatable computations than for large, interactive applications.

Good candidates often include:

If the workload requires heavy branching logic based on private inputs (for example, lots of conditional logic, loops with unknown iteration counts, or complex data-dependent memory access), FHE may be difficult to implement efficiently.
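One common way to handle data-dependent branching is to rewrite it as arithmetic that always does the same work. The sketch below uses plain Python integers as stand-ins for ciphertexts (an assumption for readability); under FHE, the condition bit and both candidate values would be encrypted, and `+` and `*` would be homomorphic operations. Both branches are always evaluated, and loops run for a fixed, public number of iterations.

```python
def select(cond_bit: int, if_true: int, if_false: int) -> int:
    """Branch-free 'if': cond * a + (1 - cond) * b, with cond in {0, 1}."""
    return cond_bit * if_true + (1 - cond_bit) * if_false

def masked_sum(values, active_bits):
    """Sum only the 'active' entries without branching on the (private) bits.

    The loop always runs over every entry, so the iteration count reveals
    nothing about how many entries are active.
    """
    total = 0
    for value, bit in zip(values, active_bits):
        total = select(bit, total + value, total)
    return total

picked = select(1, 10, 20)                           # 10
skipped = select(0, 10, 20)                          # 20
prefix = masked_sum([5, 7, 9, 11], [1, 1, 0, 0])     # 5 + 7 = 12
```

The cost is visible in the structure: every branch and every iteration is paid for, which is why heavily conditional workloads tend to be expensive under FHE.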

FHE is most valuable when the party doing the computation should not be trusted with plaintext. Common threat models include:

If your primary risk is internal misuse, misconfigured access, or uncontrolled data copies, you may get more immediate value by tightening governance and architecture first – and then using FHE for the highest-risk compute steps.

FHE introduces overhead compared to plaintext computation. In practice, the right question is not “Is it slow?” but “Is it fast enough for this workflow?”

A useful way to frame requirements:

Many teams focus only on keeping inputs encrypted. In regulated environments, you may also need to protect:

A strong design includes both cryptography and policy: define what results are allowed to be decrypted, by whom, and under what auditing controls.

FHE is most compelling when you need useful computation under strict confidentiality. Common patterns include:

Organizations can compute metrics, train statistical models, or run analysis on encrypted datasets held by another party (or in a cloud environment).

FHE can be used for privacy-preserving model evaluation (inference) where inputs remain encrypted. It can also support some training workflows depending on complexity and the scheme used.
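Encrypted inference usually means expressing the model with additions and multiplications only. As a hedged illustration, the plaintext sketch below scores a logistic regression model using a degree-3 Taylor polynomial in place of the sigmoid; the features, weights, and bias are made up, and the Taylor polynomial is only accurate near zero. Practical systems typically fit a polynomial over the expected input range instead.

```python
import math

def poly_sigmoid(z: float) -> float:
    """Degree-3 Taylor approximation of the logistic function around 0.

    Uses only additions and multiplications by constants and by z.
    """
    return 0.5 + 0.25 * z - (1.0 / 48.0) * z * z * z

def fhe_friendly_score(features, weights, bias: float) -> float:
    """Linear layer plus polynomial activation; no comparisons or exp()."""
    z = bias
    for x, w in zip(features, weights):
        z += x * w
    return poly_sigmoid(z)

def exact_sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

features = [0.2, -0.1, 0.4]    # made-up inputs
weights = [1.5, 2.0, 0.5]      # made-up model
bias = 0.1
score = fhe_friendly_score(features, weights, bias)   # z is about 0.4
gap = abs(score - exact_sigmoid(0.4))                 # approximation error
```

For inputs this close to zero the gap is far below typical decision thresholds; the error grows quickly as the magnitude of `z` increases, which is one reason model design and input scaling matter for encrypted inference.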

In some architectures, teams pair FHE with federated learning so models can be trained across data silos while keeping raw data local, and encrypting selected computations or model updates when additional confidentiality is required.

When data cannot be pooled due to legal, contractual, or sovereignty constraints, FHE can support collaborative computation while minimizing exposure of raw data.

The important nuance: FHE enables confidentiality during processing, but you still need governance around what results may reveal (for example, whether output itself could be sensitive).

FHE is most useful when organizations need to compute on sensitive data but cannot share raw records or expose plaintext during processing.

In regulated industries, that requirement is common.

Government teams often need to collaborate across agencies, contractors, or partner nations while keeping datasets restricted. FHE can support approved computations where each party can contribute data for analysis without providing direct access to the underlying records.

Common examples include:

Healthcare data is both highly regulated and highly valuable for analytics. FHE can help teams compute on protected health information while reducing exposure during processing.

Practical examples include:

Financial services frequently need to analyze sensitive customer and transaction signals while limiting who can access raw data. FHE can help isolate plaintext from the compute operator while still enabling specific computations.

Common examples include:

The best use cases are the ones where you can define the computation clearly, measure performance requirements upfront, and control what outputs are decrypted and shared.

FHE is powerful, but it is not the default solution for every secure compute requirement.

You generally should not start with FHE if:

Teams evaluating privacy-preserving AI often consider multiple approaches.

Confidential computing is often used as a practical baseline in enterprise secure compute designs, because it protects data in use with hardware-backed isolation while keeping software workflows relatively familiar.

Here’s a clear comparison at the level most decision-makers need.

Practical difference: trusted execution environments (TEEs), the hardware behind confidential computing, can often be faster and more general-purpose; FHE can provide stronger “data never decrypts in the compute environment” guarantees.

In practice, real deployments often combine techniques, depending on governance and threat model: for example, secure multi-party computation (MPC) for key management or distributed trust, and FHE for specific compute steps.

If your stakeholders are asking “Which one is best?”, the right answer is usually: “Best for which workload, threat model, and operational constraints?”

It is false that FHE alone makes a system private. FHE protects plaintext during computation, but it does not automatically prevent information leakage from outputs.

If you compute something overly specific — or allow unrestricted queries — sensitive information can still be inferred from decrypted results. Cryptography protects data while it is processed, but it does not decide which computations should be allowed to run in the first place.
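A short illustration of output leakage, using made-up salary data. The function below stands in for an approved aggregate query whose result is decrypted by the key holder; even if the computation itself ran entirely on ciphertexts, two permitted queries can be subtracted to recover one person's value.

```python
# Hypothetical records; in an FHE deployment these would be encrypted.
salaries = {"ana": 82_000, "ben": 67_000, "chen": 91_000, "dee": 74_000}

def approved_sum(names) -> int:
    """Stand-in for an encrypted SUM query whose result gets decrypted."""
    return sum(salaries[name] for name in names)

everyone = approved_sum(salaries)                                   # 314000
without_chen = approved_sum(n for n in salaries if n != "chen")     # 223000
leaked = everyone - without_chen                                    # 91000
```

Neither query exposes a record on its own, yet together they reveal one. Defenses therefore sit at the policy layer: minimum group sizes, query auditing, or output perturbation.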

That is where governance becomes critical.

FHE is a powerful mechanism for protecting data and models from exposure in untrusted environments, but organizations still need strong governance controls to define:

Without these controls, you can build a technically secure system that still produces privacy risks through misuse or over-permissive analytics.

For high-risk analytics, techniques such as differential privacy can be layered on top of encrypted computation to limit what can be inferred about individuals from outputs. Combining cryptographic protection with governance and statistical safeguards creates a more complete privacy-preserving architecture.
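As a sketch of that layering, the Laplace mechanism can be applied to an aggregate before it is released. The placement (after decryption, before release) and the parameter values are assumptions for this example, and Python's `random` module is used only for readability; a production system would need carefully implemented, secure noise sampling.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def dp_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the Laplace noise scale is 1 / epsilon.
    """
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(7)   # seeded only to make the demo repeatable
released = dp_count(true_count=128, epsilon=0.5, rng=rng)
```

A smaller `epsilon` means more noise and stronger protection for individuals, at the cost of a less accurate released value.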

FHE makes a major class of attacks harder by removing plaintext from the compute operator, but you still need:

FHE has moved far beyond theory, but it is still a specialized technique. The best indicator of maturity is not hype – it’s whether your target workloads can be expressed efficiently and validated end-to-end with acceptable cost and latency.

FHE is a family of schemes and implementations. Different schemes support different data types (integers, approximate real numbers, boolean gates) and have different performance profiles.

If you don’t define this, you can over-engineer – or choose the wrong PET.

Be specific:

Key ownership determines who can decrypt outputs. You want clear answers on:

Some workflows need to protect:

Many explanations focus only on protecting input data, but in regulated investigations or competitive analytics, the model itself can also be sensitive.

Fully Homomorphic Encryption is a practical direction for organizations that want to use sensitive data for analytics and AI while reducing exposure in untrusted environments.

It is not a general replacement for all security controls. It is a way to change the trust boundary: the compute environment can be treated as less trusted because plaintext never needs to appear there.

If your organization handles regulated, classified, or highly sensitive data – and you still need to compute on it at scale – FHE is one of the most important privacy-preserving tools to understand and evaluate.

If you want to see how privacy-preserving AI can work in your environment, the next step is a short technical discovery: your target workload, your threat model, and what “good” performance looks like.

Successfully deploying Fully Homomorphic Encryption requires more than understanding the mathematics. It requires engineering encrypted computation pipelines that meet performance targets, integrate with existing systems, and operate within defined governance and compliance frameworks.

Duality has played a central role in making FHE practical for real-world environments. Duality solutions are deployed in production systems where sensitive data must be processed without exposing plaintext during computation, including highly regulated sectors with strict operational constraints.

A clear example is Duality’s Zero-Footprint Insights (ZFI) use case. ZFI is built on FHE and enables secure analytics and collaboration across organizations that cannot share raw data. It shows how encrypted computation can support cross-boundary data use while maintaining confidentiality and policy enforcement.

Duality is also a driving force behind OpenFHE, the leading open-source FHE library used by researchers and commercial teams worldwide. By contributing directly to the core cryptographic infrastructure, Duality helps advance performance, strengthen security, and improve the usability of FHE implementations across the ecosystem.

Organizations evaluating FHE need answers to concrete questions:

Duality works with government, healthcare, and financial institutions to translate these questions into deployable architectures, moving from feasibility assessment to secure production implementation.

If your organization needs to compute on sensitive data without exposing it during processing, the starting point is a focused evaluation of your workload, threat model, and operational requirements.