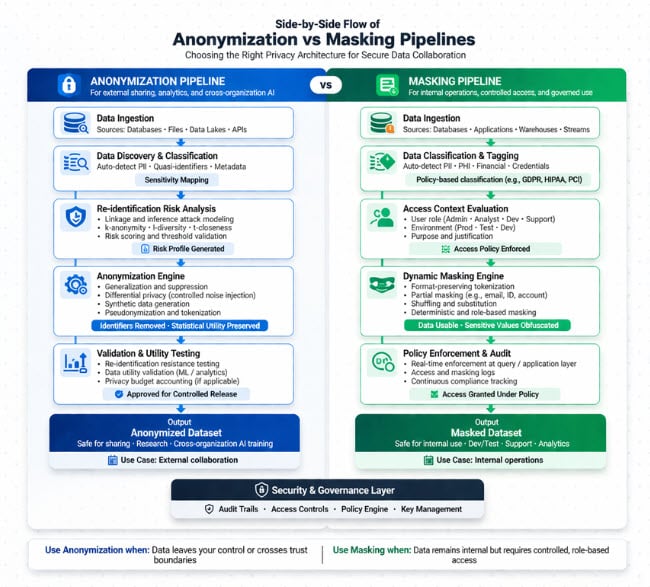

Choosing between data anonymization vs data masking defines how your organization balances privacy risk, regulatory exposure, and data usability.

Modern data environments make this decision unavoidable. Organizations are expected to extract value from sensitive datasets while simultaneously complying with strict regulations like GDPR and HIPAA. At the same time, data is no longer confined to a single system but moves across cloud platforms, analytics pipelines, AI workflows, and external partnerships. This creates a fundamental tension: how do you enable data use without exposing the underlying individuals or entities? Anonymization and masking are two of the most widely used approaches to solve this, but they operate under very different assumptions about trust, control, and risk.

What Is Data Anonymization?

Data anonymization transforms datasets so that individuals cannot be identified by any reasonably available means. This includes removing not only direct identifiers (names, IDs) but also indirect identifiers that could enable linkage attacks.

In practice, anonymization is used when data must leave its original trust boundary to be shared externally, published, or used in multi-party environments.

Core Characteristics

- Irreversible by design

- Removes direct and quasi-identifiers

- Focuses on preventing re-identification

- Often reduces data granularity

Common Techniques

- Generalization (e.g., exact age → range)

- Suppression (removing fields entirely)

- Noise injection for statistical protection

- Differential privacy for formal guarantees
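As a rough illustration of the first two techniques, the sketch below generalizes an exact age into a range and suppresses selected fields. The field names (`name`, `zip`, `age`, `diagnosis`) and the ten-year bucket width are assumptions made for this example, not a recommended configuration.

```python
from typing import Any


def generalize_age(age: int, width: int = 10) -> str:
    """Replace an exact age with a range such as '30-39'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def anonymize_record(record: dict[str, Any], suppress: set[str]) -> dict[str, Any]:
    """Drop suppressed fields entirely and generalize the age field if present."""
    out = {k: v for k, v in record.items() if k not in suppress}
    if "age" in out:
        out["age"] = generalize_age(out["age"])
    return out


# Hypothetical record; all values are made up.
record = {"name": "Alice Example", "age": 34, "zip": "90210", "diagnosis": "asthma"}
print(anonymize_record(record, suppress={"name", "zip"}))
# {'age': '30-39', 'diagnosis': 'asthma'}
```

Generalization and suppression alone do not make a dataset anonymous; they only reduce how precisely a record can be matched.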

Most “anonymized” datasets are only conditionally anonymous. Without rigorous controls, re-identification becomes possible whenever external datasets can be linked to the released data.
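One common way to reason about this residual risk is to count how many records share the same combination of quasi-identifiers (the idea behind k-anonymity). The sketch below is a minimal check under assumed column names (`age`, `zip3`); a small result means some records are easy to single out once an external dataset is available.

```python
from collections import Counter


def smallest_group(rows, quasi_identifiers):
    """Size of the smallest group of rows sharing the same quasi-identifier values."""
    counts = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(counts.values()) if counts else 0


# Hypothetical, already-generalized rows.
rows = [
    {"age": "30-39", "zip3": "902", "diagnosis": "asthma"},
    {"age": "30-39", "zip3": "902", "diagnosis": "diabetes"},
    {"age": "40-49", "zip3": "100", "diagnosis": "flu"},
]

print(smallest_group(rows, ["age", "zip3"]))  # 1: the third row is unique
```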

Where It Fits

- Cross-organization data sharing

- Public datasets and research

- AI training across jurisdictions

- Regulatory-driven data release

What Is Data Masking?

Data masking replaces sensitive values with synthetic or obfuscated equivalents while preserving the dataset’s structure and usability.

Unlike anonymization, masking assumes the data remains within a controlled environment where some level of trust still exists.

Core Characteristics

- Often reversible (depending on method)

- Preserves format and schema

- Maintains operational usability

- Applied dynamically or statically

Common Techniques

- Tokenization (mapped replacements)

- Substitution (realistic fake values)

- Shuffling within datasets

- Format-preserving encryption
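Two of these techniques can be sketched in a few lines. The `TokenVault` class and `shuffle_column` helper below are hypothetical names for illustration: the vault keeps an in-memory mapping (a real system would need a secured, persistent store), and shuffling permutes one column so its values stay realistic but are no longer attached to the original rows.

```python
import random
import secrets


class TokenVault:
    """Tokenization sketch: maps each value to a random token and back."""

    def __init__(self):
        self._to_token = {}
        self._to_value = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so the same value always maps to the same token.
        if value not in self._to_token:
            token = "tok_" + secrets.token_hex(8)
            self._to_token[value] = token
            self._to_value[token] = value
        return self._to_token[value]

    def detokenize(self, token: str) -> str:
        # Reversal is only possible for whoever can read the vault.
        return self._to_value[token]


def shuffle_column(rows, column, seed=None):
    """Return copies of the rows with one column's values permuted across rows."""
    rng = random.Random(seed)
    values = [row[column] for row in rows]
    rng.shuffle(values)
    return [{**row, column: value} for row, value in zip(rows, values)]


vault = TokenVault()
token = vault.tokenize("4111-1111-1111-1111")
print(token, "->", vault.detokenize(token))
```

The token itself carries no information about the original value; the protection depends entirely on who can reach the mapping.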

Masking is a data control mechanism, not a privacy guarantee. It limits exposure but does not eliminate risk.

Where It Fits

- Development and QA environments

- Internal analytics workflows

- Customer support systems

- Role-based data access

Data Anonymization vs Data Masking: Key Differences

At a high level, the distinction comes down to intent and trust boundaries. Anonymization aims to remove identity risk from the data itself, whereas masking reduces exposure and preserves usability within controlled environments.

Core Differences (Simplified)

| Dimension | Data Anonymization | Data Masking |
| --- | --- | --- |
| Reversibility | Irreversible | Often reversible or linkable |
| Privacy Strength | High (if properly implemented) | Moderate and context-dependent |
| Data Utility | Reduced due to transformation | High, retains operational value |
| Typical Use Case | External sharing, research, AI collaboration | Internal systems, testing, controlled access |
| Risk Profile | Vulnerable to linkage attacks if poorly designed | Vulnerable to insider threats and key compromise |

When Should You Use Data Anonymization?

Use anonymization when data leaves your control or crosses trust boundaries. This includes any scenario where you cannot enforce strict access controls or contractual guarantees.

In these cases, the data itself must carry the privacy protection.

Best-Fit Scenarios

- Sharing datasets with external partners

- Publishing research or open data

- Training models across institutions

- Operating under strict regulatory frameworks

Practical Constraint

Anonymization often reduces data fidelity. This directly impacts:

- Model accuracy in machine learning

- Granularity in analytics

- Ability to perform record-level analysis

If your use case requires row-level traceability or exact values, anonymization will likely break it.

When Should You Use Data Masking?

Use masking when data remains inside a controlled environment, but you want to reduce exposure risk across systems or user roles.

Masking is particularly effective when you need realistic data behavior without exposing actual sensitive values.

Best-Fit Scenarios

- Application testing with production-like data

- Internal dashboards and analytics tools

- Customer service interfaces

- Fine-grained access control systems

Practical Constraint

Masking depends heavily on:

- Access control enforcement

- Key management (for tokenization/encryption)

- Insider risk mitigation

If these controls fail, masked data can often be reversed or inferred.
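To make the key-management point concrete, the sketch below compares an unkeyed hash with a keyed HMAC as a masking function. The phone-number format and the `guess` helper are assumptions for this example. When the space of possible values is small, an unkeyed hash can be reversed by enumeration, and a keyed one can be reversed the same way by anyone who obtains the key.

```python
import hashlib
import hmac


def unkeyed_mask(value: str) -> str:
    """Mask with a plain SHA-256 digest (no secret involved)."""
    return hashlib.sha256(value.encode()).hexdigest()


def keyed_mask(value: str, key: bytes) -> str:
    """Mask with HMAC-SHA256; reproducing it requires the key."""
    return hmac.new(key, value.encode(), hashlib.sha256).hexdigest()


def guess(masked: str, candidates, mask_fn):
    """Enumerate candidate values and return the one whose mask matches, if any."""
    for candidate in candidates:
        if mask_fn(candidate) == masked:
            return candidate
    return None


# Hypothetical small value space: 100 possible phone numbers.
candidates = [f"555-01{n:02d}" for n in range(100)]
key = b"example-key-kept-in-a-secrets-manager"

print(guess(unkeyed_mask("555-0142"), candidates, unkeyed_mask))       # recovered
print(guess(keyed_mask("555-0142", key), candidates, unkeyed_mask))    # None without the key
```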

The Real Trade-Off: Privacy vs Data Utility

The decision between data anonymization vs data masking is fundamentally about how much risk you are willing to accept versus how much utility you need.

In Practice

- Anonymization prioritizes privacy guarantees

- Masking prioritizes operational usability

This creates a structural tension:

- High privacy → reduced analytical precision

- High usability → increased exposure risk

Where Organizations Go Wrong

- Using masking for external data sharing

- Over-anonymizing datasets needed for ML

- Ignoring how datasets can be recombined externally

The biggest risk is not in the transformation itself, but in how the data is later combined, accessed, and interpreted.

Limitations You Cannot Ignore

Both approaches have failure modes that are often overlooked in high-level comparisons.

Anonymization Risks

- Re-identification via auxiliary datasets

- Difficulty proving true anonymity

- Loss of utility for advanced analytics

- Irreversible data transformation errors

Masking Risks

- Insider threats and privilege escalation

- Weak tokenization or key management

- Pattern leakage in format-preserving encryption

- Overexposure in poorly segmented systems

These are not edge cases; they are common failure points in production systems.

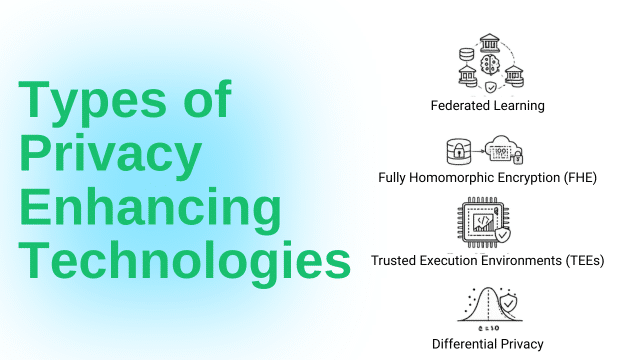

Beyond the Binary: PETs and Secure Data Collaboration

Modern data architectures increasingly avoid the masking vs anonymization trade-off entirely by using privacy-enhancing technologies (PETs).

These approaches protect data during computation, not just at rest or in transit.

Key PET Approaches

- Federated Learning: Models are trained across distributed data sources without moving raw data

- Fully Homomorphic Encryption (FHE): Enables computation directly on encrypted data

- Trusted Execution Environments (TEEs): Hardware-isolated environments for secure processing

- Differential Privacy: Adds mathematically bounded noise to outputs
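As one concrete instance, differential privacy for a counting query is often implemented with the Laplace mechanism. The sketch below is a minimal version under stated assumptions: the `private_count` name and the example data are hypothetical, the query is a simple count (sensitivity 1), and production use would need a vetted library and privacy-budget accounting.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(values, predicate, epsilon: float, rng=None) -> float:
    """Count matching values, then add Laplace noise with scale 1/epsilon."""
    rng = rng or random.Random()
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)


ages = list(range(18, 90))  # made-up data
print(private_count(ages, lambda a: a >= 65, epsilon=0.5))
```

Smaller values of `epsilon` add more noise and give stronger protection, which is the same privacy versus utility trade-off expressed as a single parameter.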

Why This Matters

Instead of transforming data to make it safe, PETs allow you to:

- Keep data in its original environment

- Enforce computation-level privacy

- Reduce the need for irreversible transformations

PETs shift the question from “how do we hide data?” to “how do we compute without exposing it?”

How to Choose: A Practical Decision Framework

Selecting between data anonymization vs data masking is an architectural choice that must align with your trust model, regulatory exposure, and how the data will actually be used over time.

Most failures happen when teams choose based on tooling instead of context. The right approach emerges from evaluating four dimensions together:

1. Trust Boundary: Where Does the Data Flow?

The most important question is whether data crosses a boundary where you lose control.

If data moves outside your organization into partner environments, research collaborations, or external platforms, you cannot rely on access controls or contracts alone. The data must be protected independently of the environment.

- Crossing organizations or jurisdictions → use anonymization or privacy-enhancing technologies (PETs)

- Remaining within controlled infrastructure → masking can be sufficient, if paired with strong access controls

The nuance here is that “internal” does not always mean safe. Multi-cloud architectures, vendor integrations, and analytics platforms often behave like external trust zones in practice.

Treat any environment you do not fully control, including third-party SaaS and shared data platforms, as an external trust boundary.

2. Data Sensitivity: What Is the Regulatory and Business Impact?

Not all sensitive data carries the same level of risk. The required protection depends on both regulatory classification and business consequences of exposure.

- Highly regulated data (health, financial, identity) → requires strong, often irreversible privacy guarantees; anonymization or PETs are typically expected

- Moderately sensitive data (operational, behavioral) → masking may be acceptable within controlled environments

However, sensitivity is not static. Combining datasets can increase sensitivity unexpectedly, especially when quasi-identifiers are present.

For example, location data combined with timestamps can become identifying even if each dataset alone appears safe.

Sensitivity must be evaluated at the dataset and ecosystem level, not just at the individual field level.
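A simple way to see this effect is to measure how many rows become unique as fields are combined. In the sketch below, the `cell` (coarse location) and `hour` (coarse timestamp) fields are assumptions for illustration; neither field is unique alone, but the pair singles out every row.

```python
from collections import Counter


def unique_fraction(rows, fields):
    """Fraction of rows whose combination of `fields` values appears exactly once."""
    keys = [tuple(row[f] for f in fields) for row in rows]
    counts = Counter(keys)
    return sum(1 for k in keys if counts[k] == 1) / len(rows)


# Hypothetical location pings.
pings = [
    {"cell": "A", "hour": 8},
    {"cell": "A", "hour": 9},
    {"cell": "B", "hour": 8},
    {"cell": "B", "hour": 9},
]

print(unique_fraction(pings, ["cell"]))          # 0.0
print(unique_fraction(pings, ["hour"]))          # 0.0
print(unique_fraction(pings, ["cell", "hour"]))  # 1.0
```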

3. Required Data Fidelity: How Will the Data Be Used?

The more precisely you need to use the data, the harder it becomes to anonymize it safely.

Anonymization introduces distortion, sometimes subtle, sometimes severe. This is where many AI initiatives fail. Teams anonymize data aggressively, only to discover that:

- Models lose predictive power

- Feature relationships break down

- Edge cases disappear

At that point, they either revert to raw data (increasing risk) or accept degraded outcomes.

Core Insight

If your workflow depends on data relationships and distributions, test anonymization impact before committing to it.
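One lightweight way to run such a test is to compare a statistic you care about before and after the transformation. The sketch below uses Pearson correlation on made-up `ages` and `spend` values and a hypothetical `generalize` helper that maps each age to the midpoint of a 20-year bucket. For this sample, the coarsened correlation comes out lower than the raw one.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def generalize(value, width):
    """Replace a value with the midpoint of its bucket."""
    return (value // width) * width + width / 2


# Made-up sample data.
ages = [23, 27, 31, 38, 42, 47, 55, 61]
spend = [210, 250, 300, 340, 400, 430, 520, 580]

raw = pearson(ages, spend)
coarse = pearson([generalize(a, 20) for a in ages], spend)
print(f"raw={raw:.3f} coarse={coarse:.3f}")
```

The same pattern applies to any downstream metric, such as model accuracy on a held-out set, measured on raw versus anonymized inputs.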

4. Threat Model: Who Are You Defending Against?

Security decisions are meaningless without a clear understanding of who the attacker is and what capabilities they have.

Different techniques protect against different threat classes:

- External attackers or unknown recipients → anonymization reduces risk even if data is exposed

- Internal users, analysts, or engineers → masking combined with governance and access control is more relevant

However, insider risk is often underestimated. Masked data can still be:

- Reconstructed via repeated queries

- Correlated across systems

- Exposed through weak access policies

At the same time, anonymization does not fully eliminate risk if attackers can link datasets together.

Putting It Together: Decision Mapping

These four factors should be evaluated together to guide your approach:

| Scenario | Recommended Approach |
| --- | --- |
| Data shared externally or across organizations | Anonymization or PETs |
| Internal systems with strict access control | Masking |
| AI/ML collaboration across parties | PETs + selective anonymization |
| Development and testing environments | Masking with synthetic or tokenized data |
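The mapping can also be written down as a small rule function, which makes the decision reviewable and testable. The `Scenario` fields and the order in which the rules are checked are assumptions made for this sketch; a real assessment would also weigh sensitivity and threat model.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    crosses_trust_boundary: bool
    multi_party_ml: bool = False
    dev_or_test: bool = False


def recommend(s: Scenario) -> str:
    """Return the recommended approach from the decision mapping."""
    if s.multi_party_ml:
        return "PETs + selective anonymization"
    if s.crosses_trust_boundary:
        return "Anonymization or PETs"
    if s.dev_or_test:
        return "Masking with synthetic or tokenized data"
    # Internal systems with strict access control.
    return "Masking"


print(recommend(Scenario(crosses_trust_boundary=True)))
print(recommend(Scenario(crosses_trust_boundary=False, dev_or_test=True)))
```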

Tooling and Enterprise Considerations

Whether anonymization or masking is effective depends on how consistently, transparently, and contextually it is applied across your data ecosystem.

Most enterprises already have some form of masking or anonymization in place. The gap is that these controls are often fragmented, static, and disconnected from real data flows. As a result, protection breaks down at scale, especially in AI pipelines, cross-team analytics, and multi-cloud environments.

To evaluate tooling properly, you need to look beyond feature lists and focus on operational behavior under real conditions.

What to Look For in Enterprise-Grade Solutions

Policy-Driven Automation

At scale, you cannot rely on field-level configurations or one-off scripts. Protection must be:

- Defined as policies, not transformations

- Applied consistently across datasets and environments

- Automatically enforced as data moves through pipelines

This becomes critical in environments where:

- New datasets are constantly created

- Schemas evolve over time

- Multiple teams access the same data differently
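A minimal sketch of the policy-driven idea: protection rules are attached to data classifications rather than to individual columns, so a new or renamed column is handled by its tag. The tag names, the `POLICY` table, and the fail-closed default for untagged columns are all assumptions for this example, not a description of any specific product.

```python
def suppress(value):
    """Remove the value entirely."""
    return None


def redact(value):
    """Replace every character with '*', keeping only the length."""
    return "*" * len(str(value))


def keep(value):
    """Pass the value through unchanged."""
    return value


# Policy: classification tag -> transformation.
POLICY = {"direct_identifier": suppress, "sensitive": redact, "public": keep}


def apply_policy(row, column_tags, policy=POLICY):
    """Apply the policy per column; untagged columns are treated as sensitive."""
    return {
        column: policy[column_tags.get(column, "sensitive")](value)
        for column, value in row.items()
    }


tags = {"name": "direct_identifier", "ssn": "sensitive", "country": "public"}
row = {"name": "Alice", "ssn": "123-45-6789", "country": "DE", "new_col": "abc"}
print(apply_policy(row, tags))
```

Treating untagged columns as sensitive means schema changes degrade toward over-protection instead of silent exposure.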

Native Integration with Data Pipelines and ML Workflows

Most privacy tools operate at the database layer. That is no longer sufficient.

Modern architectures require protection that integrates with:

- Data lakes and warehouses

- Streaming pipelines

- Feature stores and ML pipelines

- Cross-organization data exchanges

If protection is not embedded into these workflows, teams will:

- Bypass controls for performance reasons

- Export raw data for offline processing

- Reintroduce sensitive data into downstream systems

Auditability, Compliance, and Data Lineage

Regulated environments require proof of protection.

Effective solutions must provide:

- Detailed audit logs (who accessed what, when, and how)

- Data lineage tracking across transformations

- Policy enforcement visibility

- Compliance reporting aligned with frameworks like GDPR and HIPAA

Without this, organizations cannot demonstrate control even if protections exist.