As data continues to grow exponentially, so does the value that can be derived from it. The need to keep those data sets private, however, complicates our ability to learn from and gain insights into the wealth of data at our disposal. Privacy Enhancing Technologies (PETs) enable data scientists to derive powerful insights from large, valuable data sets without deleting or exposing sensitive records, protecting privacy and maintaining regulatory compliance.

In this blog, we will dive into the six most common privacy enhancing technologies, as well as two additional tools that fall under the PETs umbrella.

Privacy Enhancing Technologies (PETs): The Classics

The exact definition of privacy enhancing technologies (PETs) is still under debate. Generally speaking, PETs include any technology, whether software or hardware, that allows sensitive data to be computed on without revealing the underlying data. How this is achieved depends on the technique, on whether it is software- or hardware-based, and on its intended use.

For this subset, we define “classic” privacy enhancing technologies as techniques, architectures, or infrastructure that do not modify the original inputs. Here are the top six classic privacy enhancing technologies:

| Name | Definition |
| --- | --- |
| Homomorphic Encryption | Data and/or models are encrypted at rest, in transit, and in use (so sensitive data never needs to be decrypted), yet can still be analyzed. |
| Multiparty Computation | Allows multiple parties to perform joint computations on their individual inputs without revealing the underlying data to one another. |
| Differential Privacy | A data aggregation method that adds randomized “noise” so that individual original inputs cannot be reliably reverse engineered from the output. |
| Federated Learning | Statistical analysis or model training on decentralized data sets; a “traveling algorithm” where the model gets “smarter” with every analysis of the data. |
| Secure Enclave/Trusted Execution Environment | A hardware-isolated execution environment, usually a secure area of a main processor, that guarantees that the code and data loaded inside it are protected. |
| Zero-Knowledge Proofs | A cryptographic method by which one party can prove to another that a given statement is true without conveying any information beyond the fact that the statement is true. |

Homomorphic Encryption

What is homomorphic encryption: A method that allows data to be computed on while it is still encrypted. Data and/or models are encrypted at all points in the data lifecycle: at rest, in transit, and in use.

What is homomorphic encryption used for: Computations and/or model training on sensitive data – especially between a data owner (or owners) and third parties; deploying encrypted models.

Drawbacks: Works best on structured data; requires customized analytics; computationally expensive.
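A small example may help make “computed on while it is still encrypted” concrete. The sketch below implements a toy version of the Paillier cryptosystem, which is only additively (partially) homomorphic rather than fully homomorphic: multiplying two ciphertexts yields an encryption of the sum of their plaintexts. The tiny hard-coded primes, the function names, and the salary figures are illustrative assumptions; as written the scheme is insecure and is not meant for production (it needs Python 3.9+ for `math.lcm`).

```python
import math
import secrets

# Toy Paillier cryptosystem: additively homomorphic, NOT secure (tiny primes).

def generate_keypair(p: int = 61, q: int = 53) -> tuple[int, tuple[int, int, int]]:
    n = p * q
    lam = math.lcm(p - 1, q - 1)   # Carmichael's function lambda(n)
    mu = pow(lam, -1, n)           # modular inverse; this shortcut works because g = n + 1
    return n, (n, lam, mu)         # (public key, private key)

def encrypt(n: int, m: int) -> int:
    """Encrypt an integer 0 <= m < n as c = g^m * r^n mod n^2, with g = n + 1."""
    n_sq = n * n
    r = secrets.randbelow(n - 1) + 1
    while math.gcd(r, n) != 1:     # r must be invertible mod n
        r = secrets.randbelow(n - 1) + 1
    return (pow(n + 1, m, n_sq) * pow(r, n, n_sq)) % n_sq

def decrypt(private_key: tuple[int, int, int], c: int) -> int:
    n, lam, mu = private_key
    x = pow(c, lam, n * n)
    return ((x - 1) // n) * mu % n  # L(x) = (x - 1) / n, then multiply by mu

def add_encrypted(n: int, c1: int, c2: int) -> int:
    """Multiplying ciphertexts adds the underlying plaintexts (mod n)."""
    return (c1 * c2) % (n * n)

public_key, private_key = generate_keypair()
# Two salaries are encrypted separately; a third party adds them without seeing either
c_total = add_encrypted(public_key, encrypt(public_key, 1200), encrypt(public_key, 1800))
print(decrypt(private_key, c_total))  # 3000
```

Even in this toy, one encrypted addition involves big-integer modular arithmetic on values of size n², which hints at why homomorphic encryption is computationally expensive compared with working on plaintext.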

Multiparty Computation

What is multiparty computation: A cryptographic technique that allows multiple parties to perform joint computations on their individual inputs without revealing the underlying data to one another.

What is multiparty computation used for: Benchmarking on different data sets to produce an aggregated result.

Drawbacks: Parties can infer sensitive data from the output; each deployment requires a completely custom setup; costs are often high due to communication requirements.
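The benchmarking use case above can be sketched with additive secret sharing, one of several building blocks used in multiparty computation. In this simulation, all “parties” run in one process, and the function names, modulus, and input values are assumptions for illustration. Each party splits its private value into random shares, each party adds up the shares it receives, and only the combined total is revealed.

```python
import secrets

PRIME = 2**61 - 1  # field modulus (a Mersenne prime); all arithmetic is mod PRIME

def share(secret: int, n_parties: int) -> list[int]:
    """Split `secret` into n random shares that sum to it mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((secret - sum(shares)) % PRIME)
    return shares

def secure_sum(private_inputs: list[int]) -> int:
    n = len(private_inputs)
    # shares_sent[i][j] is the share of party i's input that party i sends to party j
    shares_sent = [share(x, n) for x in private_inputs]
    # Each party j adds up the shares it received; each share on its own is uniformly random
    partial_sums = [sum(shares_sent[i][j] for i in range(n)) % PRIME for j in range(n)]
    # Publishing the partial sums reveals only the total
    return sum(partial_sums) % PRIME

# Three hypothetical companies benchmark a combined figure without sharing their own
print(secure_sum([120, 340, 95]))  # 555
```

The output drawback is easy to see here: with only two parties, each can subtract its own input from the published total and learn the other's exact value.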

Differential Privacy

What is differential privacy: A data aggregation method that adds randomized “noise” to the data or to query results, so that the original inputs of any individual cannot be reliably reverse engineered from the output.

What is differential privacy used for: Providing directionally correct statistical analysis, but not exact or precise figures.

Drawbacks: Limits on the number and type of computations that can be performed, since each query consumes part of a finite privacy budget; i.e., a reduction in data utility.

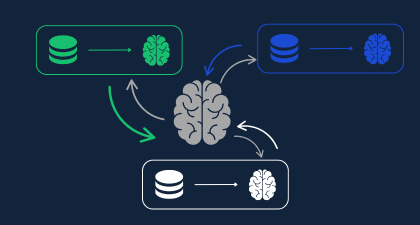

Federated Learning

What is federated learning: Federated Learning enables statistical analysis or model training on decentralized data sets via a “traveling algorithm” where the model gets “smarter” with every new analysis of the data.

What is federated learning used for: Secure model training or analysis on decentralized data sets. Learning from user inputs on mobile devices (e.g., spelling errors and auto-completing words) to train a model.

Drawbacks: Federated Learning has generated some hype, particularly since Google began researching how to incorporate FL into its AI.

Unfortunately, there are many drawbacks:

- Debatable privacy benefits:
  - It is possible to reverse engineer the underlying data sets based on metadata revealed by the model once it’s complete
  - The model is known by all collaborating parties
- A large volume of data is required to gain insights
- High maintenance time investment: it’s complex to manage FL over distributed systems
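The “traveling algorithm” idea can be sketched with federated averaging (FedAvg), a common FL algorithm, though not necessarily the one any particular product uses. In this simulation, each hypothetical client fits a one-parameter linear model y ~ w * x on data that never leaves it, and the server only sees and averages the clients' updated weights. The data, learning rate, and round counts are assumptions chosen for illustration.

```python
def local_update(w: float, data: list[tuple[float, float]], lr: float = 0.01, epochs: int = 5) -> float:
    """A few steps of gradient descent on one client's private data (model: y ~ w * x)."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w

def federated_averaging(client_datasets: list[list[tuple[float, float]]], rounds: int = 50, w: float = 0.0) -> float:
    """Server loop: send w to every client, then average the returned weights by data size."""
    total = sum(len(data) for data in client_datasets)
    for _ in range(rounds):
        local_weights = [local_update(w, data) for data in client_datasets]  # runs on each client
        w = sum(lw * len(data) for lw, data in zip(local_weights, client_datasets)) / total
    return w

# Hypothetical private data held by two clients (both follow y = 3x); it never leaves them
client_a = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]
client_b = [(4.0, 12.0), (5.0, 15.0)]
print(round(federated_averaging([client_a, client_b]), 3))  # 3.0
```

Every client receives the shared global weight each round, which is the “model known by all collaborating parties” drawback above, and the weights clients send back are the kind of information from which underlying data can sometimes be reverse engineered.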

Secure Enclave/Trusted Execution Environment

What is a secure enclave/trusted execution environment: A hardware-isolated execution environment, usually a secure area of a main processor, that guarantees that the code and data loaded inside it are protected.

What are secure enclave/trusted execution environments used for: Computations on sensitive data.

Drawbacks: Hardware dependent – i.e., trust is placed in the specific chip, not in the math used to encrypt and decrypt the data; data not encrypted while in the TEE.

Zero-Knowledge Proofs

What are zero-knowledge proofs: One party (the prover) can prove to another party (the verifier) that it knows something about data without revealing the underlying data itself. (Prof. Shafi Goldwasser invented ZKPs together with Silvio Micali and Charles Rackoff in 1985; Goldwasser and Micali received the 2012 Turing Award in part for this work.)

What are zero-knowledge proofs used for: Providing privacy to public blockchains; online voting; authentication.

Drawbacks: Proofs are probabilistic, so there is no absolute guarantee that a claim is true, only a very small chance of accepting a false one; computations may require a lot of power to perform; high costs.
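For a concrete example of proving knowledge without revealing it, here is a minimal sketch of the Schnorr identification protocol, a classic interactive zero-knowledge proof (strictly, honest-verifier zero-knowledge) in which a prover shows it knows a secret x with y = g^x mod p without revealing x. The tiny group parameters (p = 2039, q = 1019, g = 4) and the function names are illustrative assumptions; real deployments use much larger groups or elliptic curves.

```python
import secrets

# Toy group: P = 2Q + 1 is a safe prime and G generates the subgroup of prime order Q
P, Q, G = 2039, 1019, 4

def keygen() -> tuple[int, int]:
    x = secrets.randbelow(Q - 1) + 1        # prover's secret
    return x, pow(G, x, P)                  # (secret x, public y = G^x mod P)

def commit() -> tuple[int, int]:
    r = secrets.randbelow(Q)                # fresh random nonce for every proof
    return r, pow(G, r, P)                  # prover sends t = G^r mod P

def respond(x: int, r: int, c: int) -> int:
    return (r + c * x) % Q                  # uniformly random because r is, so x stays hidden

def verify(y: int, t: int, c: int, s: int) -> bool:
    return pow(G, s, P) == (t * pow(y, c, P)) % P

# One round of the protocol
x, y = keygen()                 # prover keeps x, publishes y
r, t = commit()                 # prover -> verifier: t
c = secrets.randbelow(Q)        # verifier -> prover: random challenge c
s = respond(x, r, c)            # prover -> verifier: s
print(verify(y, t, c, s))       # True
```

A cheating prover who does not know x can still pass a round by guessing the challenge in advance, which succeeds with probability 1/q. This is the sense in which there is no absolute guarantee; a large q or repeated rounds make that chance negligible.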

Data Privacy Tools Sometimes Categorized as Privacy Enhancing Technologies (PETs)

Some listings of privacy enhancing technologies include data privacy tools that change the input data to conform to regulations (e.g., HIPAA) governing Personally Identifiable Information (PII). We list them separately here because of a significant drawback: data devaluation. Changing or deleting data using either of the two techniques below enhances privacy, but it also significantly degrades the quality of the data and ultimately reduces the value of the insights that can be drawn from it. Because precise calculations cannot be performed on altered data, valuable insights are lost with every computation.

| Name | Definition |
| --- | --- |
| Synthetic Data | Artificially created data meant to represent real-world sensitive data. |
| Anonymized (De-identified) Data | Data stripped of PII by deleting or masking personal identifiers (e.g., with hashing) and by suppressing or generalizing quasi-identifiers. |

Synthetic Data

What is synthetic data: Fully algorithmically generated data produced by a computer simulation that approximates a real data set.

What it is used for: Reducing constraints on using sensitive data in highly regulated industries or for software testing.

Drawbacks: Cannot do precise, accurate computations on individual data points; cannot be linked to real data.
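As a deliberately simple illustration of algorithmically generated data that approximates a real data set, the sketch below fits an independent normal distribution to each numeric column of a small, made-up table and samples new rows from it. Real synthetic-data generators model the joint distribution far more carefully; the column names, data, and function names here are assumptions.

```python
import random
import statistics

def fit_column_model(rows: list[dict[str, float]]) -> dict[str, tuple[float, float]]:
    """Estimate a mean and standard deviation for each numeric column, independently."""
    model = {}
    for column in rows[0]:
        values = [row[column] for row in rows]
        model[column] = (statistics.mean(values), statistics.stdev(values))
    return model

def generate_synthetic(model: dict[str, tuple[float, float]], n_rows: int, seed=None) -> list[dict[str, float]]:
    """Sample new rows from the fitted per-column normal distributions."""
    rng = random.Random(seed)
    return [
        {column: rng.gauss(mu, sigma) for column, (mu, sigma) in model.items()}
        for _ in range(n_rows)
    ]

# Hypothetical "real" records
real_rows = [
    {"age": 34, "income": 52_000},
    {"age": 45, "income": 61_000},
    {"age": 29, "income": 48_000},
    {"age": 52, "income": 75_000},
    {"age": 41, "income": 58_000},
]
model = fit_column_model(real_rows)
synthetic_rows = generate_synthetic(model, n_rows=1000, seed=7)
# Should land close to the real mean age of 40.2
print(round(statistics.mean(row["age"] for row in synthetic_rows), 1))
```

The synthetic rows reproduce aggregate statistics such as column means, but none corresponds to a real person, so they cannot support precise computations on individual data points or be linked back to real records. Because each column is sampled independently, this toy version also loses the correlation between age and income present in the original rows.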

Anonymized (De-identified) Data

What is anonymized data: Strips data of PII using various techniques to delete or mask personal identifiers.

What it is used for: Computations on sensitive data.

Drawbacks: Cannot do precise, accurate computations on individual data points; inability to link data sets; possible to re-identify PII. For example, in 2006 Netflix released de-identified movie-rating data to participants in the Netflix Prize, its $1M recommendation contest. Researchers later re-identified some customers by cross-referencing the ratings with public IMDb reviews; a privacy lawsuit followed, and in 2010 Netflix canceled a planned second contest.
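The de-identification techniques named above can be sketched in a few lines. The code below suppresses one direct identifier (name), masks another (email) with a keyed hash, generalizes two quasi-identifiers (age and ZIP code), and then measures k-anonymity, the size of the smallest group of records sharing the same quasi-identifier values. The field names, records, and secret key are hypothetical.

```python
import hashlib
import hmac
from collections import Counter

# Hypothetical key; it must stay secret, or the hashed emails can be brute-forced
SECRET_KEY = b"replace-with-a-long-random-secret"

def pseudonymize(value: str) -> str:
    """Mask a direct identifier with a keyed hash (HMAC-SHA256), truncated for readability."""
    return hmac.new(SECRET_KEY, value.encode(), hashlib.sha256).hexdigest()[:16]

def deidentify(record: dict) -> dict:
    decade = (record["age"] // 10) * 10
    return {
        "id": pseudonymize(record["email"]),    # masked direct identifier
        "age_band": f"{decade}-{decade + 9}",   # generalized quasi-identifier
        "zip3": record["zip"][:3] + "**",       # generalized quasi-identifier
        "diagnosis": record["diagnosis"],       # attribute kept for analysis
        # "name" is suppressed: it is simply not copied over
    }

def k_anonymity(rows: list[dict], quasi_identifiers: list[str]) -> int:
    """Size of the smallest group of rows sharing identical quasi-identifier values."""
    groups = Counter(tuple(row[q] for q in quasi_identifiers) for row in rows)
    return min(groups.values())

patients = [
    {"name": "Ann", "email": "ann@example.com", "age": 34, "zip": "94107", "diagnosis": "flu"},
    {"name": "Bob", "email": "bob@example.com", "age": 37, "zip": "94110", "diagnosis": "asthma"},
    {"name": "Cy", "email": "cy@example.com", "age": 52, "zip": "10001", "diagnosis": "flu"},
]
released = [deidentify(p) for p in patients]
print(k_anonymity(released, ["age_band", "zip3"]))  # 1
```

Here k is 1: one record is unique in its age band and ZIP prefix, so anyone who knows those two facts about that person can still pick out the diagnosis. This is how re-identification remains possible even after obvious identifiers are removed.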

Choose Privacy Enhancing Technologies Based on Use Cases

As we have seen, some privacy enhancing technologies are better suited than others to specific use cases, whether that is statistical analysis or model training and tuning, and combining PETs provides even more benefits. But even where the data itself is changed, adding extra layers of privacy to your data lifecycle is valuable to your business: PETs as a whole are estimated to be able to unlock between $1.1T and $2.9T of value in underutilized data. So whether you deploy one of the PETs listed above or combine several, you are on the path to realizing the value of your data.

Want to learn more? Join us for our webinar on “Maximizing the Value of Your Data.”